2.4: Complex Variable, Stationary Path Integrals

Analytic Functions

Suppose we have a complex function \(f = u + iv\) of a complex variable \(z = x + iy\), defined in some region of the complex plane, where \(u\), \(v\), \(x\), \(y\) are real. That is to say, \[ f(z) = u(x,y) + i v(x,y), \label{2.4.1}\]

with \(u(x,y)\) and \(v(x,y)\) real functions in the plane.

We now assume that in this region \(f(z)\) is differentiable, that is to say, \[ \dfrac{df(z)}{dz} = \lim _{\Delta z \rightarrow 0} \dfrac{f(z + \Delta z)-f(z)}{\Delta z} \label{2.4.2}\]

is well-defined. What does this tell us about the functions \(u(x,y)\) and \(v(x,y)\), the real and imaginary parts of \(f(z)\)?

In fact, the property of differentiability for a function of a complex variable tells us a lot! It does not just mean that the function is reasonably smooth. The crucial difference from a function of a real variable is that \(\Delta z\) can approach zero from any direction in the complex plane, and the limit in these different directions must be the same. Of course, there are only two independent directions, so what we are really saying is \[ \frac{\partial f(x+iy)}{\partial x}=\frac{\partial f(x+iy)}{\partial (iy)}, \label{2.4.3}\]

which we can write in terms of \(u,\; v\): \[ \frac{\partial u(x,y)}{\partial x}+i\frac{\partial v(x,y)}{\partial x}=\frac{\partial u(x,y)}{\partial (iy)}+i\frac{\partial v(x,y)}{\partial (iy)}. \label{2.4.4}\]

Equating real and imaginary parts of this equation we find: \[ \frac{\partial u}{\partial x}=\frac{\partial v}{\partial y}, \;\; \frac{\partial v}{\partial x}=-\frac{\partial u}{\partial y}. \label{2.4.5}\]

These are called the Cauchy-Riemann equations.
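The direction-independence of the limit, and the Cauchy-Riemann equations that follow from it, can be checked numerically. The following is a minimal sketch. It assumes a sample point \(z_0=0.7-0.3i\) and finite step sizes of our own choosing, and the helper names `difference_quotient` and `cauchy_riemann_residuals` are hypothetical. For \(z^2\) the difference quotient is the same in every direction. For the nonanalytic function \(\bar z\) (the complex conjugate), it depends on the direction of \(\Delta z\), and the first Cauchy-Riemann equation fails.

```python
import cmath
import math


def difference_quotient(f, z, direction, h=1e-6):
    """(f(z + dz) - f(z)) / dz for a small step dz = h * direction (a unit complex number)."""
    dz = h * direction
    return (f(z + dz) - f(z)) / dz


def cauchy_riemann_residuals(f, x, y, h=1e-6):
    """Central-difference estimates of u_x - v_y and v_x + u_y for f = u + i v at (x, y).
    Both vanish (up to discretization error) where f is analytic."""
    def u(a, b):
        return f(complex(a, b)).real

    def v(a, b):
        return f(complex(a, b)).imag

    u_x = (u(x + h, y) - u(x - h, y)) / (2 * h)
    u_y = (u(x, y + h) - u(x, y - h)) / (2 * h)
    v_x = (v(x + h, y) - v(x - h, y)) / (2 * h)
    v_y = (v(x, y + h) - v(x, y - h)) / (2 * h)
    return u_x - v_y, v_x + u_y


def square(z):
    return z * z


def conjugate(z):
    return z.conjugate()


z0 = complex(0.7, -0.3)
directions = [cmath.exp(1j * k * math.pi / 4) for k in range(8)]  # eight directions for dz

# z**2: every direction gives (nearly) the same limit, 2 * z0.
print([difference_quotient(square, z0, d) for d in directions])
# conj(z): the quotient is conj(dz)/dz = exp(-2i * angle of dz), so it depends on direction.
print([difference_quotient(conjugate, z0, d) for d in directions])

print(cauchy_riemann_residuals(square, z0.real, z0.imag))     # both close to 0
print(cauchy_riemann_residuals(conjugate, z0.real, z0.imag))  # about (2, 0): u_x = 1 but v_y = -1
```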

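It immediately follows (for \(u\), differentiate the first equation with respect to \(x\) and the second with respect to \(y\), then add; \(v\) is similar) that both \(u(x,y)\) and \(v(x,y)\) must satisfy the two-dimensional Laplace equation, \[ \frac{\partial^2 u(x,y)}{\partial x^2}+\frac{\partial^2 u(x,y)}{\partial y^2}=0, \label{2.4.6}\]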

that is, \[ \nabla^2u=0\;\;\text{and}\;\;\nabla^2v=0. \label{2.4.7}\]

Notice that this implies (just as for an electrostatic potential) that \(u(x,y)\) cannot have an absolute minimum or maximum inside the region of analyticity. If \(df(z)/dz = 0\) at some point but the second-order partial derivatives \(\partial^2u/\partial x^2\) and \(\partial^2u/\partial y^2\) are nonzero there, the Laplace equation says they must have opposite signs, signaling a saddlepoint. In the general case, a two-dimensional version of Gauss’ theorem can be used to show there is no local extremum.

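Furthermore, using the Cauchy-Riemann equations, \[ \nabla u\cdot\nabla v=\left( \frac{\partial u}{\partial x},\frac{\partial u}{\partial y}\right) \cdot\left( \frac{\partial v}{\partial x},\frac{\partial v}{\partial y} \right) =\frac{\partial v}{\partial y}\frac{\partial v}{\partial x}-\frac{\partial v}{\partial x}\frac{\partial v}{\partial y}=0. \label{2.4.8}\]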

That is to say, the contour lines of constant \(u(x,y)\) are everywhere orthogonal to the contour lines of constant \(v(x,y)\), since the gradient of a function is everywhere orthogonal to its own contour lines. The important point is that just requiring differentiability of a function of a complex variable imposes a strong constraint on its real and imaginary parts, the functions \(u(x,y)\) and \(v(x,y)\).
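As a rough numerical check of the Laplace equation and of the orthogonality of the gradients, here is a sketch using finite differences with an assumed step \(h=10^{-3}\) and arbitrarily chosen test points. The helper name `laplacian_and_gradient_dot` is hypothetical. For the analytic functions \(z^2\) and \(e^z\) all three returned quantities come out essentially zero. For \(|z|^2\), which is not analytic, the Laplacian of the real part is 4.

```python
import cmath


def laplacian_and_gradient_dot(f, x, y, h=1e-3):
    """For f = u + i v at (x, y), return (Laplacian of u, Laplacian of v, grad u . grad v),
    using a five-point stencil for the Laplacians and central differences for gradients."""
    def u(a, b):
        return f(complex(a, b)).real

    def v(a, b):
        return f(complex(a, b)).imag

    def grad(g):
        return ((g(x + h, y) - g(x - h, y)) / (2 * h),
                (g(x, y + h) - g(x, y - h)) / (2 * h))

    def lap(g):
        return (g(x + h, y) + g(x - h, y) + g(x, y + h) + g(x, y - h) - 4 * g(x, y)) / h**2

    gu, gv = grad(u), grad(v)
    return lap(u), lap(v), gu[0] * gv[0] + gu[1] * gv[1]


print(laplacian_and_gradient_dot(lambda z: z * z, 0.4, -0.9))  # all close to 0
print(laplacian_and_gradient_dot(cmath.exp, 0.3, 0.8))         # all close to 0
# |z|**2 = x**2 + y**2 is real and not analytic: its Laplacian is 4, not 0.
print(laplacian_and_gradient_dot(lambda z: complex(abs(z) ** 2, 0), 0.3, 0.8))
```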

Example \(\PageIndex{1}\)

A Simple Example: \(f (z) = z^2\).

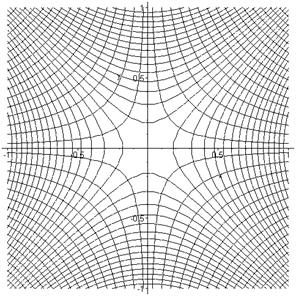

It is worthwhile building a clear picture of the real and imaginary parts of the function \(z^2\). The real part is \(x^2-y^2\), and its contour lines in the square \(-1\le x,y\le 1\) are shown below. The darker shades are the lower ground. At the origin, there is a saddlepoint with higher ground in both directions of the real axis, lower ground in the pure imaginary directions. The lines \(x = y\), \(x=-y\) (not shown) are contours at the same level (zero) as the origin.

What about the imaginary part? \(Im\, z^2=2xy\) has contours:

Putting the two sets of contour lines on the same diagram it is clear that they always cut each other orthogonally:

(Incidentally, this picture has a physical realization. It represents the field lines and equipotentials of a quadrupole magnet, used for focusing beams of charged particles.)

Example \(\PageIndex{2}\)

\(f(z) = 1/z\)

The definition of differentiation above can be used to show that \[ \frac{d}{dz}\frac{1}{z}=-\frac{1}{z^2} \label{2.4.9}\]

just as for a real variable, so the function can be differentiated everywhere in the complex plane except at the origin. The singularity at the origin is termed a “pole”, for obvious reasons.

Contour Integration: Cauchy’s Theorem

Cauchy’s theorem states that the integral of a function of a complex variable around a closed contour in the complex plane is zero if the function is analytic in the region enclosed by the contour.

This theorem can be proved at various levels of rigor. We shall give a basic physicist’s proof using Stokes’ theorem: the integral of a vector function around a contour (now in ordinary, not complex, space) is equal to the integral of the curl of that function over an area spanning the contour, provided of course the curl is well-defined everywhere on the area,

\[ \oint \vec P\cdot d\vec s=\int \text{curl}\,\vec P\cdot d\vec A. \label{2.4.10}\]

Taking the special case where the contour and the area are confined to the \(x\), \(y\) plane, and writing \(\vec P=(P,Q)\), the component of \(\text{curl}\,\vec P\) normal to the plane is \(\partial Q/\partial x-\partial P/\partial y\), and Stokes’ theorem becomes:

\[ \oint(Pdx+Qdy)=\iint \left( \frac{\partial Q}{\partial x}-\frac{\partial P}{\partial y}\right) dxdy, \label{2.4.11}\]

known in this form as Green’s theorem (and easy to prove: the two terms are separately equal,

\[\oint Qdy=\iint(\partial Q/\partial x)dxdy.\]

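This can be established by dividing the area into strips of infinitesimal width \(dy\) parallel to the x-axis and integrating with respect to \(x\) within each strip, to give \(\int_{strip}(\partial Q/\partial x)dx=Q(x_2,y)-Q(x_1,y)\), where \((x_1,y)\) and \((x_2,y)\) are the points where the strip meets the contour. Multiplying by \(dy\) and adding the contributions from all the parallel strips gives exactly the counterclockwise integral \(\oint Qdy\), since \(dy\) is positive at the right-hand end of each strip and negative at the left-hand end. The \(P\) term is handled in the same way, using strips parallel to the y-axis.)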
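Green’s theorem is easy to test numerically on the unit disk. This sketch assumes the hypothetical field \(P=-y^3\), \(Q=x^3\), for which \(\partial Q/\partial x-\partial P/\partial y=3(x^2+y^2)\) and both sides should equal \(3\pi/2\). The quadrature rules and point counts are our own choices, not part of the argument above.

```python
import math


def line_integral(p, q, n=2000):
    """Counterclockwise integral of p dx + q dy around the unit circle, x = cos t, y = sin t.
    The trapezoid rule in t is very accurate for smooth periodic integrands."""
    total = 0.0
    for k in range(n):
        t = 2 * math.pi * k / n
        x, y = math.cos(t), math.sin(t)
        total += p(x, y) * (-math.sin(t)) + q(x, y) * math.cos(t)  # dx/dt = -sin t, dy/dt = cos t
    return total * 2 * math.pi / n


def area_integral(g, nr=400, nt=400):
    """Integral of g(x, y) over the unit disk: midpoint rule in polar coordinates, dA = r dr dt."""
    dr, dt = 1.0 / nr, 2 * math.pi / nt
    total = 0.0
    for i in range(nr):
        r = (i + 0.5) * dr
        for j in range(nt):
            t = (j + 0.5) * dt
            total += g(r * math.cos(t), r * math.sin(t)) * r
    return total * dr * dt


def p_field(x, y):
    return -y**3


def q_field(x, y):
    return x**3


def curl_z(x, y):
    return 3 * x**2 + 3 * y**2  # dQ/dx - dP/dy for the field above


print(line_integral(p_field, q_field), area_integral(curl_z), 1.5 * math.pi)
```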

Now back to the complex plane: write as before \[ z=x+iy, \;\; f(z)=u(x,y)+iv(x,y) \label{2.4.12}\]

from which

\[ \oint f(z)dz=\oint(u+iv)(dx+idy)=\oint(udx-vdy)+i\oint(vdx+udy). \label{2.4.13}\]

Now apply Green’s theorem to the two integrals on the right, replacing \(P,\; Q\) with first \(u,\; -v\) then with \(v,\; u\). This gives:

\[ \iint \left( -\frac{\partial v}{\partial x}-\frac{\partial u}{\partial y}\right) dxdy+i\iint \left( \frac{\partial u}{\partial x}-\frac{\partial v}{\partial y}\right) dxdy \label{2.4.14}\]

and both these integrals are zero, because their integrands vanish identically by the Cauchy-Riemann equations.

So the integral of a function of a complex variable around a closed contour in the complex plane can only depend on nonanalytic behavior inside the contour. Consider for example a pole, and take the simplest case: integrating \(1/z\) around the unit circle in the conventional, counterclockwise, direction. With \(z=e^{i\theta}\), we have \(1/z=e^{-i\theta}\) and \(dz=ie^{i\theta}d\theta\), so \[ \oint \frac{dz}{z}=\oint id\theta=2\pi i. \label{2.4.15}\]

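The coefficient of \(1/(z-z_0)\) in the expansion of a function about a pole at \(z_0\) (here \(z_0=0\), and the coefficient is 1) is called the residue at the pole; the counterclockwise integral around the pole is \(2\pi i\) times the residue.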
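The contrast between analytic and nonanalytic integrands can be seen numerically. The sketch below uses a hypothetical helper `circle_integral`, with the number of sample points an arbitrary choice, to evaluate \(\oint f(z)dz\) around the unit circle. The analytic functions \(z^2\) and \(e^z\) give zero and \(1/z\) gives \(2\pi i\). The function \(1/z^2\) has a pole but zero residue, so it gives zero. The nonanalytic \(\bar z\) gives \(2\pi i\) although it is finite everywhere.

```python
import cmath
import math


def circle_integral(f, radius=1.0, n=4000):
    """Counterclockwise integral of f(z) dz around |z| = radius.
    With z = radius * e^{it}, dz = i z dt; the trapezoid rule in t converges very fast here."""
    total = 0j
    for k in range(n):
        z = radius * cmath.exp(2j * math.pi * k / n)
        total += f(z) * 1j * z
    return total * 2 * math.pi / n


print(circle_integral(lambda z: z * z))          # ~0: analytic inside the contour
print(circle_integral(cmath.exp))                # ~0: analytic inside the contour
print(circle_integral(lambda z: 1 / z))          # ~2*pi*i: pole with residue 1
print(circle_integral(lambda z: 1 / z**2))       # ~0: a pole, but its residue is 0
print(circle_integral(lambda z: z.conjugate()))  # ~2*pi*i: conj(z) is not analytic
```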

Moving the Contour of Integration

Cauchy’s theorem has a very important consequence: for an integral from, say, \(z_a\) to \(z_b\) in the complex plane, moving the contour in a region where the function is analytic will not affect the result, because the difference between the integral over the original contour and that over the shifted contour is an integral around a closed circuit, and therefore zero, provided the function is analytic in the region enclosed.

For an integral around a closed contour, if the only singularities enclosed by the contour are poles, the contour may be shrunk and broken up into a sum of separate small contours, one around each pole. The integral around the original contour is then \(2\pi i\) times the sum of the residues at the poles.
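Here is a sketch of both consequences for a hypothetical function with simple poles at \(0\) and \(1/2\), \(f(z)=(3z+1)/(z(z-1/2))\), whose residues are \(-2\) and \(5\). A large square contour and two small circles, one around each pole, should both give \(2\pi i(-2+5)=6\pi i\). The Simpson and trapezoid rules and the contour sizes are assumptions chosen for illustration.

```python
import cmath
import math


def segment_integral(f, a, b, n=2000):
    """Integral of f(z) dz along the straight segment a -> b, composite Simpson rule (n even)."""
    h = (b - a) / n
    total = f(a) + f(b)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * f(a + k * h)
    return total * h / 3


def polygon_integral(f, vertices, n=2000):
    """Integral around a closed polygon whose vertices are listed counterclockwise."""
    m = len(vertices)
    return sum(segment_integral(f, vertices[k], vertices[(k + 1) % m], n) for k in range(m))


def circle_integral(f, center, radius, n=2000):
    """Counterclockwise integral around a small circle, trapezoid rule in the angle."""
    total = 0j
    for k in range(n):
        w = radius * cmath.exp(2j * math.pi * k / n)
        total += f(center + w) * 1j * w  # dz = i w dtheta
    return total * 2 * math.pi / n


def f(z):
    return (3 * z + 1) / (z * (z - 0.5))  # residues -2 at z = 0 and 5 at z = 0.5


square = [complex(-2, -2), complex(2, -2), complex(2, 2), complex(-2, 2)]
big = polygon_integral(f, square)
small = circle_integral(f, 0, 0.1) + circle_integral(f, 0.5, 0.1)
expected = 2j * math.pi * (-2 + 5)
print(big, small, expected)
```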

Other Singularities: Cuts, Sheets, etc.

Poles are of course not the only possible singularities. For example, \(\log z\) has a singularity at the origin. Now, \(\log z=\log(re^{i\theta})=\log r+i\theta\). The singularity at the origin comes from the \(\log r\) term, but notice that if we go around the unit circle, \(\theta\) increases by \(2\pi\), and if we go around again it increases by a further \(2\pi\). This means that the value of \(\log z\) is not uniquely defined: at any given point in the complex plane, the possible values differ by \(2n\pi i\), \(n\) any integer. This is handled by replacing the single complex plane with a pile of sheets, and a cut going out from the origin. To find \(\log z\), you need to know not only \(z\), but also which sheet you’re on: going up one sheet means \(\log z\) has increased by \(2\pi i\). When you cross the cut, you go to the next sheet, like a multilevel parking garage. The cut can go out from the origin in any direction; the standard arrangement is along the real axis, either positive or negative.

The square root function similarly has a cut, but only two sheets.
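Python’s `cmath` module makes the sheet structure concrete. Its `cmath.log` and `cmath.sqrt` return principal values, with the cut along the negative real axis, so evaluating just above and just below \(-1\) gives values of \(\log z\) differing by \(2\pi i\). The sketch below follows each function continuously along a path by accumulating small steps, which amounts to keeping track of which sheet we are on. One turn around the origin raises \(\log z\) by \(2\pi i\), while two turns bring \(\sqrt z\) back to its starting value. The helpers `continued_log`, `continued_sqrt` and `circle_path` are hypothetical names.

```python
import cmath
import math

# Principal branch: the jump across the cut on the negative real axis is 2*pi in the imaginary part.
above = cmath.log(complex(-1.0, 1e-12))
below = cmath.log(complex(-1.0, -1e-12))
print(above.imag - below.imag)  # close to 2*pi


def circle_path(turns, n_per_turn=1000):
    """Points on the unit circle, starting at z = 1 and winding counterclockwise `turns` times."""
    return [cmath.exp(2j * math.pi * k / n_per_turn) for k in range(turns * n_per_turn + 1)]


def continued_log(path):
    """Continue log z along a path avoiding 0: for a small step the principal log of
    z / z_prev is the correct increment, so the sum stays on the right sheet."""
    value = cmath.log(path[0])
    for z_prev, z in zip(path, path[1:]):
        value += cmath.log(z / z_prev)
    return value


def continued_sqrt(path):
    """Continue sqrt z along a path in the same way, multiplying small-step ratios."""
    value = cmath.sqrt(path[0])
    for z_prev, z in zip(path, path[1:]):
        value *= cmath.sqrt(z / z_prev)
    return value


print(continued_log(circle_path(1)), continued_log(circle_path(2)))    # ~2*pi*i, ~4*pi*i
print(continued_sqrt(circle_path(1)), continued_sqrt(circle_path(2)))  # ~-1, ~+1
```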

Evaluating Rapidly Oscillating Integrals by Steepest Descent

How to evaluate \(\int_{-\infty}^{\infty} e^{iax^2}dx\) in an unambiguous fashion: an introduction to moving the contour of integration and the Method of Steepest Descent.

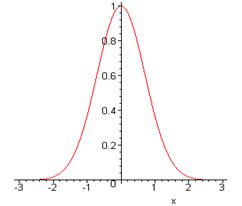

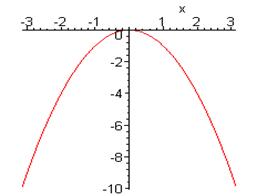

The familiar Gaussian integral \(\int_{-\infty}^{\infty} e^{-ax^2}dx=\sqrt{\frac{\pi}{a}}\) is easy to understand. Plotting the integrand (here for \(a=1\)), we see a peak of height 1 and width of order \(1/\sqrt{a}\).

But what about the result \(\int_{-\infty}^{\infty} e^{-iax^2}dx=\sqrt{\frac{\pi}{ia}}\)? This (correct) result is far less obvious! Here the integrand \(e^{-iax^2}\) is always on the unit circle in the complex plane, and equal to 1 at \(x=0\). It is instructive to plot the phase of the integrand \(\varphi(x)=-ax^2\) as a function of \(x\) (taking \(a = 1\) in the graph below).

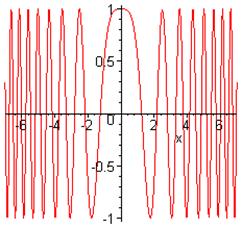

The phase is stationary at the origin, so contributions from that region add coherently. To help visualize the integrand better, here’s a plot of the real part:

It is evident that almost all the contribution to the integral comes from the central region where the phase is stationary, the increasingly rapid oscillations away from the origin ensuring that very little comes from elsewhere.
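To see how slowly the oscillating tails die away, here is a sketch that truncates the real-axis integral to \(-L<x<L\) and evaluates it with the trapezoid rule. The step size and the values of \(L\) are our own choices, and `truncated_real_axis_integral` is a hypothetical name. One integration by parts suggests the error is roughly \(1/(aL)\), so the convergence to \(\sqrt{\pi/(ia)}\) is real but slow.

```python
import cmath
import math


def truncated_real_axis_integral(a, L, h=0.002):
    """Trapezoid-rule estimate of the integral of exp(-i a x^2) over -L <= x <= L."""
    n = int(round(2 * L / h))
    step = 2 * L / n
    total = 0j
    for k in range(n + 1):
        x = -L + k * step
        weight = 0.5 if k in (0, n) else 1.0
        total += weight * cmath.exp(-1j * a * x * x)
    return total * step


a = 1.0
exact = cmath.sqrt(math.pi / (1j * a))  # principal root: sqrt(pi/a) * exp(-i pi/4)
for L in (5.0, 10.0, 20.0):
    error = abs(truncated_real_axis_integral(a, L) - exact)
    # The error shrinks only like 1/(a L): the tails oscillate but never decay in modulus.
    print(L, error, error * a * L)
```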

So how do we actually evaluate the integral? In the complex plane \(z=x+iy\), we can write

\[ I=\int_{-\infty}^{\infty} e^{-iaz^2}dz\;\; (\text{along the real axis}) =\int_{-\infty}^{\infty} e^{-ia(x^2-y^2+2ixy)}dz. \label{2.4.16}\]

Notice that the amplitude (or modulus) of the integrand, \(e^{2axy}\), equals 1 on the real axis, so it does not go to zero at infinity, although there are essentially no contributions from large \(x\) because of the rapid oscillations.

A cleaner way to see what’s going on is to rotate the contour of integration around the origin to the line \(x=-y\), at 45 degrees to the real axis. It’s safe to do this because the amplitude of the integrand decreases on going from the real axis into the region \(xy < 0\), and in fact tends to zero on going to infinity in that region.

(To give a more precise argument, suppose we replace the infinite integral by one from \(-L\) to \(L\), and take the limit of \(L\) going to infinity at the end. The distorted contour then has first a vertical leg from \((-L,0)\) to \((-L,L)\), then the diagonal from \((-L,L)\) to \((L,-L)\), and finally another vertical leg from \((L,-L)\) to \((L,0)\). On the first vertical leg, the modulus of the integral is at most the integral of the modulus, that is,

\[ \left|\int_{(-L,0)}^{(-L,L)} e^{-ia(x^2-y^2+2ixy)}dz\right|\le\int_{0}^{L} e^{-2aLy}dy<\int_{0}^{\infty} e^{-2aLy}dy=\frac{1}{2aL}, \label{2.4.17}\]

and the same bound holds on the other vertical leg, so in the limit of the original integral being over the whole real axis, the contributions from the vertical parts of the contour vanish.)

On the diagonal, \(z=(1-i)x=\sqrt{2}e^{-i\pi/4}x\), so \(z^2=-2ix^2\) and the integral becomes \(\int_{-\infty}^{\infty} e^{-2ax^2}dz\) with \(dz=\sqrt{2}e^{-i\pi/4}dx\), so \[ I=\sqrt{2}e^{-i\pi/4}\int_{-\infty}^{\infty} e^{-2ax^2}dx=\sqrt{2}e^{-i\pi/4}\sqrt{\frac{\pi}{2a}}=\sqrt{\frac{\pi}{ia}}, \label{2.4.18}\]

the required result.
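Along the rotated line the integrand is a real Gaussian, so even a crude truncation converges almost immediately. This sketch parametrizes the diagonal as \(z=(1-i)s\) and also evaluates the bound \(1/(2aL)\) on each vertical leg. The names are hypothetical, and \(a\), \(L\) and the number of trapezoid points are chosen arbitrarily.

```python
import cmath
import math


def rotated_contour_integral(a, L, n=4000):
    """Integral of exp(-i a z^2) dz along z = (1 - i) s, -L <= s <= L (the line x = -y).
    There -i a z^2 = -2 a s^2, so the integrand is a real Gaussian times dz/ds = 1 - i."""
    h = 2 * L / n
    total = 0.0
    for k in range(n + 1):
        s = -L + k * h
        weight = 0.5 if k in (0, n) else 1.0
        total += weight * math.exp(-2 * a * s * s)
    return (1 - 1j) * total * h


def vertical_leg_bound(a, L):
    """Upper bound 1/(2 a L) on the modulus of each vertical leg of the distorted contour."""
    return 1 / (2 * a * L)


a = 1.0
exact = cmath.sqrt(math.pi / (1j * a))
for L in (2.0, 4.0, 6.0):
    print(L, abs(rotated_contour_integral(a, L) - exact), vertical_leg_bound(a, L))
```

Already at \(L=6\) (for \(a=1\)) the diagonal alone agrees with \(\sqrt{\pi/(ia)}\) to better than \(10^{-10}\), because the integrand decays like \(e^{-2as^2}\) instead of merely oscillating.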

General Steepest Descent Method

In fact, the contour rotation trick used above to make the integral easier to evaluate is a particular case of a method having wide applicability in evaluating contour integrals of the form \(\int e^{iaf(z)}dz\). The basic strategy is to distort the contour of integration in the complex z-plane so that the amplitude of the integrand is as small as possible over as much of the contour of integration as possible. Actually, that is exactly what we did in the example above. To see this, it is helpful to plot the contour lines (lines of constant value) of the modulus of the integrand, \(|e^{-iaz^2}|=e^{2axy}\).

The convention here is white for the high ground, black for the valleys.

We want to keep the contour of integration as low as possible for as long as possible. The map above is of a “saddlepoint”: hills rise to the northeast and the southwest of the origin, valleys fall away to the northwest and the southeast. The strategy is to stay in the valleys (small integrand) as much as possible—however, to get from \(-\infty\) to \(+\infty\) we have to get from one valley to the other, and that means going over the saddlepoint at the origin. Obviously, to get the integrand as small as possible at all stages in the integration we must go down from the saddlepoint in both directions by the steepest possible route, and it is evident that this is right down the center of the valley, just the contour we chose above. Note that this steepest descent path is also one of stationary phase. This is because for any analytic function of a complex variable \(f(z)\), the lines of constant \(Re\, f(z)\) are perpendicular to those of constant \(Im\, f(z)\). For a function \(e^{f(z)}\), the steepest descent line is perpendicular to the lines of constant \(Re\, f(z)\), and is therefore a line of constant \(Im\, f(z)\), that is, constant phase of \(e^{f(z)}\).

Saddlepoints of Analytic Functions

Suppose we have a function \(f(z)\) analytic in some region \(R\) of the complex plane, and at some point \(z_0\) inside \(R\) the derivative \(\frac{df(z)}{dz} = 0\). Then in the neighborhood of \(z_0\), \[ f(z) = f(z_0) + \dfrac{1}{2} f''(z_0)(z-z_0)^2 + \dots \label{2.4.19}\]

Close enough to \(z_0\) we can neglect the higher-order terms, and for the case of \(f''(z_0)\) real, the contour lines of the real and imaginary parts of \(f(z)\) will then be exactly those we have plotted for \(z^2\) above. For \(f''(z_0)=|f''(z_0)|e^{i\alpha}\) complex, the plots will be rotated: since \(e^{i\alpha}(z-z_0)^2=\left(e^{i\alpha/2}(z-z_0)\right)^2\), the pattern is turned through the angle \(-\alpha/2\), half the phase of \(f''(z_0)\). That is to say, for any analytic function, near any point where \(df(z)/dz = 0\) and \(f''(z_0)\neq 0\), the real and imaginary parts of the function have saddlepoints, with contour maps that are rotated versions of those above.
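The rotation of the saddlepoint pattern with the phase of \(f''(z_0)\) can be checked by brute force. Write \(f(z)-f(z_0)\approx c\,w^2\) with \(w=z-z_0=re^{i\theta}\) and \(c=f''(z_0)/2=|c|e^{i\alpha}\). Then \(\text{Re}(c\,w^2)=|c|r^2\cos(2\theta+\alpha)\), so the steepest descent direction should be \(\theta=(\pi-\alpha)/2\) (mod \(\pi\)). Along it \(\text{Im}(c\,w^2)=0\), so the phase is stationary. The sketch below searches over angles and compares; the helper names are hypothetical and the grid resolution is an arbitrary choice.

```python
import cmath
import math


def steepest_descent_angle(c, samples=36000):
    """Brute-force search for the direction theta (mod pi) along which Re(c * w**2),
    w = r * e^{i theta}, decreases fastest away from w = 0. Here c stands for f''(z0)/2."""
    def height(k):
        theta = math.pi * k / samples
        return (c * cmath.exp(2j * theta)).real

    return math.pi * min(range(samples), key=height) / samples


def angle_mod_pi(a, b):
    """Distance between two directions, treating theta and theta + pi as the same line."""
    d = (a - b) % math.pi
    return min(d, math.pi - d)


for alpha in (0.0, math.pi / 2, -math.pi / 2, 1.0):
    c = cmath.exp(1j * alpha)
    found = steepest_descent_angle(c)
    predicted = (math.pi - alpha) / 2
    phase = (c * cmath.exp(2j * found)).imag  # Im(c w^2) / r^2 along the found direction
    print(alpha, found, angle_mod_pi(found, predicted), phase)
```

For \(f(z)=-iaz^2\), \(c=-ia\) has \(\alpha=-\pi/2\), giving \(\theta=3\pi/4\), which is the line \(x=-y\).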

Integrating Through a Saddlepoint

We consider now integrals of the form \[\int_C e^{f(z)}dz \label{2.4.20}\]

where \(C\) is some path in a region where \(f(z)\) is analytic. This means the value of the integral will not be affected by distorting the path, provided it stays in the region of analyticity. (The path of integration is usually called the contour of integration—we’ll call it path here, to avoid confusion with our contours, which have the standard geographic meaning, joining points having the same value of some parameter.)

Note that with the exponential form of the integrand, the real part of \(f(z)\) determines the magnitude of the integrand, the imaginary part of \(f(z)\) determines its phase.

The strategy is to arrange the path of integration so that as much as possible of it is in the valleys, where the integrand is small, then to go over the saddlepoint by the steepest possible route, which would be staying on the imaginary axis in the case of \(z^2\) plotted above. It is important to note that this “steepest descent” route is also a path along which the imaginary part of \(f(z)\) remains constant, so the contributions along this path are all in phase, that is to say, they add coherently.

The bottom line is that by directing the path of integration through the saddlepoint along the steepest route for the magnitude of the integrand, the biggest contributions to the integral are all in phase. Near the saddlepoint, the integrand along this path has standard Gaussian form. If \(\text{Re}\,f(z)\) falls off steeply enough away from the saddlepoint (typically because \(f(z)\) is proportional to a large parameter), the contribution to the integral from the rest of the path can be neglected. This method is therefore often valuable in cases where some parameter becomes large: we give a number of examples to clarify this point.
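For reference, the Gaussian integral through the saddlepoint can be written out explicitly. This is a sketch, assuming \(f''(z_0)\neq 0\), extending the integration along the steepest descent line to infinity in both directions, and writing \(z-z_0=se^{i\theta}\) with \(s\) real and \(\theta\) chosen so that \(f''(z_0)e^{2i\theta}=-|f''(z_0)|\) (of the two such directions, which differ by \(\pi\), the one matching the sense in which the path is traversed):

\[ \int_C e^{f(z)}dz \approx e^{f(z_0)}\int_{-\infty}^{\infty} e^{-\frac{1}{2}|f''(z_0)|s^2}\,e^{i\theta}ds = e^{f(z_0)}\,e^{i\theta}\sqrt{\frac{2\pi}{|f''(z_0)|}}. \]

For \(f(z)=-iaz^2\) at \(z_0=0\), \(f''(0)=-2ia\) and \(\theta=-\pi/4\), giving \(e^{-i\pi/4}\sqrt{\pi/a}=\sqrt{\pi/(ia)}\). For \(f(t)=n\ln t-t\) at \(t=n\), \(f''(n)=-1/n\) and \(\theta=0\), giving \(\sqrt{2\pi n}\,n^ne^{-n}\).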

Saddlepoint Estimation of \(n!\)

We use the identity

\[ n!=\int_0^{\infty} t^ne^{-t}dt=\int_0^{\infty} e^{f(t)}dt \quad \text{with} \quad f(t)=n\ln t-t. \label{2.4.21}\]

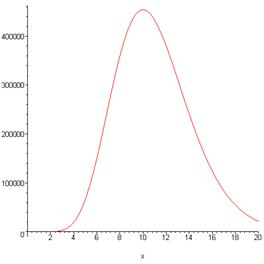

To picture \(t^ne^{-t}\), here it is for \(n=10\):

Note that

\[ f'(t)=\frac{n}{t}-1,\;\; f'=0\text{ for }t=n, \;\; f''(t)=-\frac{n}{t^2}. \label{2.4.22}\]

Therefore, in the neighborhood of the maximum value of \(f(t)\) at \(t=n\), \[ f(t)=n\ln n-n-\frac{1}{2n}(t-n)^2+\text{higher-order terms}. \label{2.4.23}\]

For integer \(n\), the integrand \(t^ne^{-t}\) is analytic in any finite region of the complex plane. Taking \(n=10\), as in the real-axis graph above, and plotting the contours of \(Re\, t^ne^{-t}\) in the neighborhood of \(t=10\), we find:

It is clear that the integral along the real axis is in fact a steepest descent path. The reason we look at this straightforward case is to gain some experience about when it is reasonable to throw away all the contribution to the integral except that near the saddlepoint. If we simply take \[ f(t)=f(n)-\frac{1}{2n}(t-n)^2=n\ln n-n-\frac{1}{2n}(t-n)^2 \label{2.4.24}\]

and take the \(t\) integration to be over the whole real axis, not just positive \(t\), it is a Gaussian integral and \[ n!=\int_0^{\infty} e^{f(t)}dt \cong e^{f(n)}\int_{-\infty}^{\infty} e^{-(1/2n)(t-n)^2}dt=\sqrt{2\pi n}\,n^n e^{-n}. \label{2.4.25}\]

More precise, and considerably more complicated, methods give the leading correction to this expression: it multiplies the result by \(1+1/12n\), so the naïve Gaussian saddlepoint result is accurate to within 1% for \(n = 10\), and improves as \(n\) increases.
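The size of the error is easy to check directly. This sketch compares \(\sqrt{2\pi n}\,n^ne^{-n}\) with the exact \(n!\) from `math.factorial`, with and without the factor \(1+1/12n\). The function name `stirling` is our own.

```python
import math


def stirling(n):
    """Gaussian saddlepoint estimate of n!: sqrt(2 pi n) * n**n * e**(-n)."""
    return math.sqrt(2 * math.pi * n) * n**n * math.exp(-n)


for n in (5, 10, 20, 50):
    exact = math.factorial(n)
    plain = (exact - stirling(n)) / exact                           # relative error of the estimate
    corrected = (exact - stirling(n) * (1 + 1 / (12 * n))) / exact  # with the 1/(12 n) correction
    print(n, f"{plain:.5f}", f"{1 / (12 * n):.5f}", f"{corrected:.2e}")
```

For \(n=10\) the relative error of the plain saddlepoint estimate is about 0.8%, and including the \(1/12n\) factor reduces it to a few parts in \(10^5\).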

The Delta Function

Recall that the delta function can be defined as the limit of a Gaussian:

\[ \delta(x)=\lim_{\Delta\to0}\frac{1}{(4\pi \Delta^2)^{1/2}}e^{-x^2/4\Delta^2}. \label{2.4.26}\]

It is easy to see how this leads to \[ \int f(x)\delta(x)dx=f(0) \label{2.4.27}\]

for an integral along the real axis with a function \(f(x)\) reasonably well-behaved near the origin. Shankar mentions that the definition also works even if \(\Delta^2\) is replaced by \(i\Delta^2\). In that case, the absolute value of the function is the same everywhere on the real axis, and increases as \(\Delta^{-1}\) as \(\Delta\) is made small. The reason it still works is that the phase oscillates rapidly everywhere except near the origin, where the phase is momentarily stationary, so essentially all the contribution comes from there.

However, it is easier to believe \[ \delta(x)=\lim_{\Delta\to0}\frac{1}{(4\pi i\Delta^2)^{1/2}}e^{-x^2/4i\Delta^2} \label{2.4.28}\]

on going into the complex plane. If we change variables from \(x\) to \(x'\), where \(x^2=ix'^2\), the integral again becomes a simple real Gaussian. But, regarding \(x\) as a complex variable, transforming to \(x'\) is just equivalent to rotating the axes by \(\pi/4\), or multiplying by the square root of \(i\): \(x=\sqrt{i}\,x'=e^{i\pi/4}x'\). The steepest descent route through the origin is now along the line at \(\pi/4\) to the real axis. So this is a perfectly good definition of the \(\delta\)-function provided we can distort the path of integration from the real axis to this line, on which the real and imaginary parts of \(x\) are equal. (Strictly speaking, the path would now include two octants of a circle at very large \(R\); their contribution vanishes in the limit of \(R\) going to infinity.)
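Here is a numerical sketch of the complex-width kernel. It assumes the test function is analytic (here `cmath.cos` and `cmath.exp`, both entire), so that the path may be rotated. Integrating along \(x=e^{i\pi/4}s\), the exponent becomes \(-s^2/4\Delta^2\), and the factor \(e^{i\pi/4}\) from \(dx\) cancels the one in the principal square root \(\sqrt{4\pi i\Delta^2}\). For \(\cos x\) the result should be exactly \(e^{-i\Delta^2}\), which tends to \(\cos 0=1\) as \(\Delta\to0\). The function name `smeared_value` and the quadrature settings are hypothetical choices.

```python
import cmath
import math


def smeared_value(g, delta, n=4000, half_width=20.0):
    """Integral of g(x) times exp(-x**2 / (4 i delta**2)) / sqrt(4 pi i delta**2), taken along
    the rotated path x = e^{i pi/4} s with |s| <= half_width * delta (trapezoid rule).
    g must be analytic so that the path may be rotated off the real axis."""
    rot = cmath.exp(1j * math.pi / 4)           # x = rot * s, so dx = rot * ds
    norm = cmath.sqrt(4j * math.pi * delta**2)  # principal root = rot * sqrt(4 pi delta**2)
    L = half_width * delta
    h = 2 * L / n
    total = 0j
    for k in range(n + 1):
        x = rot * (-L + k * h)
        weight = 0.5 if k in (0, n) else 1.0
        # On this path -x**2 / (4 i delta**2) = -s**2 / (4 delta**2): a real, decaying Gaussian.
        total += weight * g(x) * cmath.exp(-x * x / (4j * delta**2))
    return total * rot * h / norm


for delta in (0.5, 0.1, 0.01):
    print(delta, smeared_value(cmath.cos, delta))  # equals exp(-i delta**2), tending to cos(0) = 1
```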