5.1: The Basic Algorithm

The best way to understand how Gaussian elimination works is to work through a concrete example. Consider the following \(N=3\) problem:

\[\begin{bmatrix}1 &2 &3 \\ 3 &2 &2 \\ 2 &6 &2\end{bmatrix} \begin{bmatrix} x_0 \\ x_1 \\ x_2 \end{bmatrix} = \begin{bmatrix}3 \\ 4 \\ 4\end{bmatrix}.\]

The Gaussian elimination algorithm consists of two distinct phases: row reduction and back-substitution.

5.1.1 Row Reduction

In the row reduction phase of the algorithm, we manipulate the matrix equation so that the matrix becomes upper triangular (i.e., all the entries below the diagonal are zero). To achieve this, we note that we can subtract one row from another row, without altering the solution. In fact, we can subtract any multiple of a row.
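
As a quick numerical check of this claim, the following sketch takes the example system above together with its solution \((0.8, 0.2, 0.6)\), which is derived at the end of this section, and confirms that the solution still satisfies every equation after \(3\) times row \(0\) has been subtracted from row \(1\). The helper names `residual` and `subtract_row` are illustrative choices, not part of the original text.

```python
# Example system from this section: A x = b.
A = [[1.0, 2.0, 3.0],
     [3.0, 2.0, 2.0],
     [2.0, 6.0, 2.0]]
b = [3.0, 4.0, 4.0]
x = [0.8, 0.2, 0.6]  # solution obtained at the end of this section


def residual(row, rhs, x):
    """Return (row . x) - rhs, which is zero when x satisfies the equation."""
    return sum(a * xi for a, xi in zip(row, x)) - rhs


def subtract_row(A, b, target, source, factor):
    """Return copies of A and b with factor * (row source) subtracted from row target."""
    A2 = [row[:] for row in A]
    b2 = b[:]
    A2[target] = [t - factor * s for t, s in zip(A[target], A[source])]
    b2[target] = b[target] - factor * b[source]
    return A2, b2


A2, b2 = subtract_row(A, b, target=1, source=0, factor=3.0)

# Largest absolute residual over all rows, before and after the row operation.
before = max(abs(residual(A[i], b[i], x)) for i in range(3))
after = max(abs(residual(A2[i], b2[i], x)) for i in range(3))
print(A2[1], b2[1])
print(before < 1e-12, after < 1e-12)
```

Both residuals vanish up to floating-point rounding, because the row operation subtracts the same multiple from both sides of the equation.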

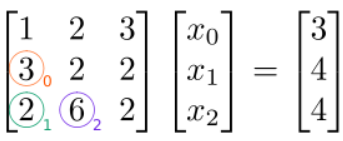

We will eliminate (zero out) the elements below the diagonal in a specific order: from top to bottom along each column, then from left to right for successive columns. For our \(3\times 3\) example, the elements that we intend to eliminate, and the order in which we will eliminate them, are indicated by the colored numbers \(0\), \(1\), and \(2\) in the following figure:

The first matrix element we want to eliminate is at \((1,0)\) (orange circle). To eliminate it, we subtract, from this row, a multiple of row \(0\). We will use a factor of \(3/1=3\):

\[(3x_0 + 2x_1 + 2x_2) - (3/1)(1x_0 + 2x_1 + 3x_2) = 4 - (3/1) 3\]
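
Carrying out this subtraction coefficient by coefficient (a worked check of the arithmetic, using only the numbers in the equation above):

\[\begin{aligned} x_0 &: \quad 3 - 3\cdot 1 = 0, \\ x_1 &: \quad 2 - 3\cdot 2 = -4, \\ x_2 &: \quad 2 - 3\cdot 3 = -7, \\ \text{right-hand side} &: \quad 4 - 3\cdot 3 = -5. \end{aligned}\]

Row \(1\) therefore becomes \(0x_0 - 4x_1 - 7x_2 = -5\).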

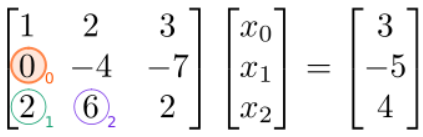

The factor of \(3\) we used is determined as follows: we divide the matrix element at \((1,0)\) (which is the one we intend to eliminate) by the element at \((0,0)\) (which is the one along the diagonal in the same column). As a result, the term proportional to \(x_{0}\) disappears, and we obtain the following modified linear equations, which possess the same solution:

(Note that we have changed the entry in the vector on the right-hand side as well, not just the matrix on the left-hand side!) Next, we eliminate the element at \((2,0)\) (green circle). To do this, we subtract, from this row, a multiple of row \(0\). The factor to use is \(2/1=2\), which is the element at \((2,0)\) divided by the \((0,0)\) (diagonal) element:

\[(2x_0 + 6x_1 + 2x_2) - (2/1)(1x_0 + 2x_1 + 3x_2) = 4 - (2/1) 3\]
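
Evaluating each coefficient in turn (again, just the arithmetic of the equation above written out):

\[\begin{aligned} x_0 &: \quad 2 - 2\cdot 1 = 0, \\ x_1 &: \quad 6 - 2\cdot 2 = 2, \\ x_2 &: \quad 2 - 2\cdot 3 = -4, \\ \text{right-hand side} &: \quad 4 - 2\cdot 3 = -2. \end{aligned}\]

Row \(2\) therefore becomes \(0x_0 + 2x_1 - 4x_2 = -2\).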

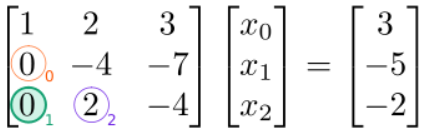

The result is

Next, we eliminate the \((2,1)\) element (blue circle). This element lies in column \(1\), so we eliminate it by subtracting a multiple of row \(1\). The factor to use is \(2/(-4)=-0.5\), which is the \((2,1)\) element divided by the \((1,1)\) (diagonal) element:

\[(0x_0 + 2x_1 - 4x_2) - (2/(-4))(0x_0 - 4x_1 - 7x_2) = -2 - (2/(-4)) (-5)\]
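
Written out coefficient by coefficient, with the factor \(2/(-4) = -0.5\), row \(2\) (right-hand side \(-2\)) minus \(-0.5\) times row \(1\) (right-hand side \(-5\)) gives:

\[\begin{aligned} x_1 &: \quad 2 - (-0.5)(-4) = 0, \\ x_2 &: \quad -4 - (-0.5)(-7) = -7.5, \\ \text{right-hand side} &: \quad -2 - (-0.5)(-5) = -4.5. \end{aligned}\]

Row \(2\) therefore becomes \(-7.5\,x_2 = -4.5\).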

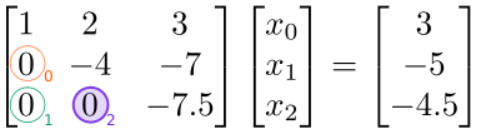

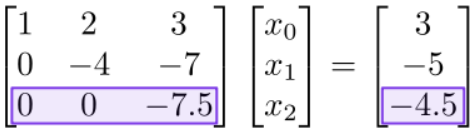

The result is

We have now completed the row reduction phase, since the matrix on the left-hand side is upper-triangular (i.e., all the entries below the diagonal have been set to zero).

5.1.2 Back-Substitution

In the back-substitution phase, we read off the solution from the bottom-most row to the top-most row. First, we examine the bottom row:

Thanks to row reduction, all the matrix elements on this row are zero except for the last one. Hence, we can read off the solution

\[x_{2}=(-4.5)/(-7.5)=0.6.\]

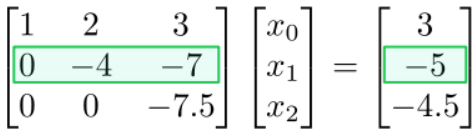

Next, we look at the row above:

This is an equation involving \(x_{1}\) and \(x_{2}\). But from the previous back-substitution step, we know \(x_{2}\). Hence, we can solve for

\[x_1 = [-5 - (-7) (0.6)] / (-4) = 0.2.\]

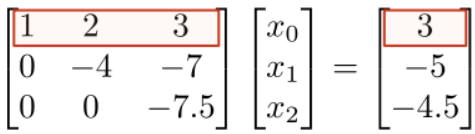

Finally, we look at the row above:

This involves all three variables \(x_{0}\), \(x_{1}\), and \(x_{2}\). But we already know \(x_{1}\) and \(x_{2}\), so we can solve for

\[x_0 = [3 - (2)(0.2) - (3)(0.6)] / 1 = 0.8.\]

The final result is

\[\begin{bmatrix}x_0 \\ x_1 \\ x_2\end{bmatrix} = \begin{bmatrix}0.8 \\ 0.2 \\ 0.6\end{bmatrix}.\]
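
The two phases worked through above can be sketched in plain Python as follows. This is a minimal illustration rather than a production solver: it performs no pivoting, so it assumes every diagonal element is nonzero when it is used as a divisor, and the function names `row_reduce` and `back_substitute` are chosen here for illustration only.

```python
def row_reduce(A, b):
    """Reduce A to upper-triangular form in place, applying the same row operations to b.

    Assumption: no pivoting is done, so A[n][n] must be nonzero whenever it is used.
    """
    N = len(b)
    for n in range(N):                 # column whose below-diagonal entries are eliminated
        for m in range(n + 1, N):      # each row below the diagonal, top to bottom
            factor = A[m][n] / A[n][n]
            for k in range(n, N):      # entries to the left of column n are already zero
                A[m][k] -= factor * A[n][k]
            b[m] -= factor * b[n]      # the right-hand side changes too


def back_substitute(A, b):
    """Solve the upper-triangular system A x = b, working from the bottom row upwards."""
    N = len(b)
    x = [0.0] * N
    for n in reversed(range(N)):
        known = sum(A[n][m] * x[m] for m in range(n + 1, N))  # terms with x_m already found
        x[n] = (b[n] - known) / A[n][n]
    return x


# The N = 3 example from this section.
A = [[1.0, 2.0, 3.0],
     [3.0, 2.0, 2.0],
     [2.0, 6.0, 2.0]]
b = [3.0, 4.0, 4.0]

row_reduce(A, b)
x = back_substitute(A, b)
print(A)  # upper-triangular matrix
print(b)  # modified right-hand side
print(x)  # solution, equal to (0.8, 0.2, 0.6) up to floating-point rounding
```

The lists are modified in place to mirror the step-by-step updates in the text; a caller who needs the original matrix afterwards should pass in copies.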

5.1.3 Runtime

Let's summarize the components of the Gaussian elimination algorithm, and analyze how many steps each part takes:

| Phase | Step | Cost |
|---|---|---|
| Row reduction | • Step forwards through the rows. For each row \(n\), | \(N\) steps |
| | ◦ Perform pivoting (to be discussed below). | \(N-n +1 \sim N\) steps |
| | ◦ Step forwards through the rows larger than \(n\). For each such row \(m\), | \(N-n \sim N\) steps |
| | ▪ Subtract \((A'_{mn}/A'_{nn})\) times row \(n\) from the row \(m\) (where \(\mathbf{A}'\) is the current matrix). This eliminates the matrix element at \((m,n)\). | \(O(N)\) arithmetic operations |
| Back-substitution | • Step backwards through the rows. For each row \(n\), | \(N\) steps |
| | ◦ Substitute in the solutions \(x_{m}\) for \(m>n\) (which are already found). Hence, find \(x_{n}\). | \(N-n \sim O(N)\) arithmetic operations |

(The "pivoting" procedure hasn't been discussed yet; we'll do that in a later section.)

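We conclude that the runtime of the row reduction phase scales as \(O(N^{3})\): the outer loop over rows \(n\), the inner loop over rows \(m\), and the \(O(N)\) arithmetic operations per row subtraction multiply to give \(N \times N \times O(N)\). The runtime of the back-substitution phase scales as \(O(N^{2})\), since each of the \(N\) rows requires \(O(N)\) arithmetic operations. The algorithm's overall runtime therefore scales as \(O(N^{3})\).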
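
To see these scalings concretely, the following sketch tallies the arithmetic updates in each phase without performing them. The function name `count_operations` and the exact counting convention (one unit per multiply-subtract update, pivoting ignored) are assumptions made for illustration.

```python
def count_operations(N):
    """Count arithmetic updates in each phase for an N x N system (pivoting ignored)."""
    row_reduction = 0
    for n in range(N):                 # each column / pivot row
        for m in range(n + 1, N):      # each row below it
            # Update columns n..N-1 of row m, plus the right-hand-side entry.
            row_reduction += (N - n) + 1
    back_substitution = 0
    for n in reversed(range(N)):
        # One term per already-known x_m (m > n), plus the final division.
        back_substitution += (N - 1 - n) + 1
    return row_reduction, back_substitution


for N in (100, 200, 400):
    print(N, count_operations(N))

r1, s1 = count_operations(100)
r2, s2 = count_operations(200)
print(r2 / r1, s2 / s1)  # growth factors when N is doubled
```

Doubling \(N\) multiplies the row reduction count by roughly \(8\) and the back-substitution count by roughly \(4\), consistent with \(O(N^{3})\) and \(O(N^{2})\) scaling.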