15.7: Statistical Interpretation of Entropy and the Second Law of Thermodynamics: The Underlying Explanation

Learning Objectives

By the end of this section, you will be able to:

- Identify probabilities in entropy.

- Analyze statistical probabilities in entropic systems.

The various ways of formulating the second law of thermodynamics tell what happens rather than why it happens. Why should heat transfer occur only from hot to cold? Why should energy become ever less available to do work? Why should the universe become increasingly disorderly? The answer is that it is a matter of overwhelming probability. Disorder is simply vastly more likely than order.

When you watch an emerging rain storm begin to wet the ground, you will notice that the drops fall in a disorganized manner both in time and in space. Some fall close together, some far apart, but they never fall in straight, orderly rows. It is not impossible for rain to fall in an orderly pattern, just highly unlikely, because there are many more disorderly ways than orderly ones. To illustrate this fact, we will examine some random processes, starting with coin tosses.

Coin Tosses

What are the possible outcomes of tossing 5 coins? Each coin can land either heads or tails. On the large scale, we are concerned only with the total heads and tails and not with the order in which heads and tails appear. The following possibilities exist:

5 heads, 0 tails

4 heads, 1 tail

3 heads, 2 tails

2 heads, 3 tails

1 head, 4 tails

0 heads, 5 tails

These are what we call macrostates. A macrostate is an overall property of a system. It does not specify the details of the system, such as the order in which heads and tails occur or which coins are heads or tails.

Using this nomenclature, a system of 5 coins has the 6 possible macrostates just listed. Some macrostates are more likely to occur than others. For instance, there is only one way to get 5 heads, but there are several ways to get 3 heads and 2 tails, making the latter macrostate more probable. Table 15.7.1 lists all the ways in which 5 coins can be tossed, taking into account the order in which heads and tails occur. Each sequence is called a microstate: a detailed description of every element of a system.

| Macrostate | Individual microstates | Number of microstates |
|---|---|---|
| 5 heads, 0 tails | HHHHH | 1 |
| 4 heads, 1 tail | HHHHT, HHHTH, HHTHH, HTHHH, THHHH | 5 |
| 3 heads, 2 tails | HHHTT, HHTHT, HHTTH, HTHHT, HTHTH, HTTHH, THHHT, THHTH, THTHH, TTHHH | 10 |
| 2 heads, 3 tails | TTTHH, TTHHT, THHTT, HHTTT, TTHTH, THTHT, HTHTT, THTTH, HTTHT, HTTTH | 10 |
| 1 head, 4 tails | TTTTH, TTTHT, TTHTT, THTTT, HTTTT | 5 |
| 0 heads, 5 tails | TTTTT | 1 |
| Total | | 32 |

The macrostate of 3 heads and 2 tails can be achieved in 10 ways and is thus 10 times more probable than the one having 5 heads. Not surprisingly, it is equally probable to have the reverse, 2 heads and 3 tails. Similarly, it is equally probable to get 5 tails as it is to get 5 heads. Note that all of these conclusions are based on the crucial assumption that each microstate is equally probable. With coin tosses, this requires that the coins not be asymmetric in a way that favors one side over the other, as with loaded dice. With any system, the assumption that all microstates are equally probable must be valid, or the analysis will be erroneous.
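The counting in Table 15.7.1 can be checked by brute force. The following is a minimal sketch, not part of the original text: it lists every head/tail sequence for 5 coins and groups the sequences by their number of heads. The function name `microstates_by_macrostate` is a hypothetical helper chosen for illustration, and the sketch assumes, as the text does, that every microstate is equally probable.

```python
from collections import defaultdict
from itertools import product


def microstates_by_macrostate(n_coins):
    """Group every H/T sequence of n_coins coins by its number of heads."""
    groups = defaultdict(list)
    for seq in product("HT", repeat=n_coins):
        # seq is a tuple such as ('H', 'T', 'H', 'H', 'T')
        groups[seq.count("H")].append("".join(seq))
    return dict(groups)


groups = microstates_by_macrostate(5)
total = sum(len(states) for states in groups.values())

for heads in sorted(groups, reverse=True):
    states = groups[heads]
    # Probability of a macrostate = its microstate count / total microstates
    print(f"{heads} heads, {5 - heads} tails: W = {len(states):2d}, "
          f"P = {len(states) / total:.3f}")
print("Total microstates:", total)
```

Because each microstate is equally likely, the probability of a macrostate is simply its microstate count divided by 32, which is why 3 heads and 2 tails is 10 times as probable as 5 heads.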

The two most orderly possibilities are 5 heads or 5 tails. (They are more structured than the others.) They are also the least likely, only 2 out of 32 possibilities. The most disorderly possibilities are 3 heads and 2 tails and its reverse. (They are the least structured.) The most disorderly possibilities are also the most likely, with 20 out of 32 possibilities for the 3 heads and 2 tails and its reverse. If we start with an orderly array like 5 heads and toss the coins, it is very likely that we will get a less orderly array as a result, since 30 out of the 32 possibilities are less orderly. So even if you start with an orderly state, there is a strong tendency to go from order to disorder, from low entropy to high entropy. The reverse can happen, but it is unlikely.

| Heads | Tails | Number of microstates (W) |
|---|---|---|
| 100 | 0 | 1 |
| 99 | 1 | 100 |
| 95 | 5 | \(7.5\times10^{7}\) |
| 90 | 10 | \(1.7\times10^{13}\) |
| 75 | 25 | \(2.4\times10^{23}\) |
| 60 | 40 | \(1.4\times10^{28}\) |
| 55 | 45 | \(6.1\times10^{28}\) |
| 51 | 49 | \(9.9\times10^{28}\) |
| 50 | 50 | \(1.0\times10^{29}\) |
| 49 | 51 | \(9.9\times10^{28}\) |
| 45 | 55 | \(6.1\times10^{28}\) |
| 40 | 60 | \(1.4\times10^{28}\) |
| 25 | 75 | \(2.4\times10^{23}\) |
| 10 | 90 | \(1.7\times10^{13}\) |
| 5 | 95 | \(7.5\times10^{7}\) |
| 1 | 99 | 100 |
| 0 | 100 | 1 |
| Total (all macrostates) | | \(1.27\times10^{30}\) |

This result becomes dramatic for larger systems. Consider what happens if you have 100 coins instead of just 5. The most orderly arrangements (most structured) are 100 heads or 100 tails. The least orderly (least structured) is that of 50 heads and 50 tails. There is only 1 way (1 microstate) to get the most orderly arrangement of 100 heads. There are 100 ways (100 microstates) to get the next most orderly arrangement of 99 heads and 1 tail (also 100 to get its reverse). And there are \(1\times10^{29}\) ways to get 50 heads and 50 tails, the least orderly arrangement. Table 15.7.2 is an abbreviated list of the various macrostates and the number of microstates for each macrostate. The total number of microstates (the total number of different ways 100 coins can be tossed) is an impressively large \(1.27\times10^{30}\). Now, if we start with an orderly macrostate like 100 heads and toss the coins, there is a virtual certainty that we will get a less orderly macrostate. If we keep tossing the coins, it is possible, but exceedingly unlikely, that we will ever get back to the most orderly macrostate. If you tossed the coins once each second, you could expect to get either 100 heads or 100 tails once in \(2\times10^{22}\) years! This period is 1 trillion (\(10^{12}\)) times longer than the age of the universe, and so the chances are essentially zero. In contrast, there is an 8% chance of getting 50 heads, a 73% chance of getting from 45 to 55 heads, and a 96% chance of getting from 40 to 60 heads. Disorder is highly likely.
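The figures quoted for 100 coins follow from binomial coefficients: the number of microstates with \(h\) heads among \(N\) coins is \(W = \binom{N}{h}\), and the total is \(2^{N}\). The sketch below is an illustration rather than part of the original text. The helper `prob_heads_between` and the value used for the number of seconds in a year are assumptions introduced here.

```python
from math import comb

N = 100
TOTAL = 2 ** N  # each of the 100 coins has 2 outcomes


def prob_heads_between(lo, hi, n=N):
    """Probability that the number of heads is in [lo, hi] for n fair coins."""
    ways = sum(comb(n, h) for h in range(lo, hi + 1))
    return ways / 2 ** n


p_50 = prob_heads_between(50, 50)
p_45_55 = prob_heads_between(45, 55)
p_40_60 = prob_heads_between(40, 60)

# Two of the TOTAL microstates are perfectly ordered (all heads, all tails),
# so on average TOTAL / 2 tosses are needed to hit one of them.
SECONDS_PER_YEAR = 3.156e7  # assumed value, roughly 365.25 days
years_to_order = (TOTAL / 2) / SECONDS_PER_YEAR

print(f"W(50 heads)      = {comb(N, 50):.2e}")
print(f"Total            = {TOTAL:.3e}")
print(f"P(50 heads)      = {p_50:.1%}")
print(f"P(45 to 55)      = {p_45_55:.1%}")
print(f"P(40 to 60)      = {p_40_60:.1%}")
print(f"Years (1 toss/s) = {years_to_order:.1e}")
```

Python integers have arbitrary precision, so `comb(100, 50)` and `2 ** 100` are exact; only the final divisions produce rounded floating-point values.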

Disorder in a Gas

The fantastic growth in the odds favoring disorder that we see in going from 5 to 100 coins continues as the number of entities in the system increases. Let us now imagine applying this approach to perhaps a small sample of gas. Because counting microstates and macrostates involves statistics, this is called statistical analysis. The macrostates of a gas correspond to its macroscopic properties, such as volume, temperature, and pressure; and its microstates correspond to the detailed description of the positions and velocities of its atoms. Even a small amount of gas has a huge number of atoms: \(1.0\,\text{cm}^{3}\) of an ideal gas at 1.0 atm and \(0^{\circ}\text{C}\) has \(2.7\times10^{19}\) atoms. So each macrostate has an immense number of microstates. In plain language, this means that there are an immense number of ways in which the atoms in a gas can be arranged, while still having the same pressure, temperature, and so on.
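The atom count quoted above can be reproduced from the ideal gas law in the form \(N = PV/kT\). This is a minimal sketch under stated assumptions: the conversion 1 atm = 101 325 Pa and the value of Boltzmann's constant are standard values supplied here, and `number_of_atoms` is a hypothetical helper name.

```python
K_B = 1.380649e-23  # Boltzmann's constant in J/K


def number_of_atoms(pressure_pa, volume_m3, temperature_k):
    """Ideal gas law solved for the number of particles: N = P V / (k T)."""
    return pressure_pa * volume_m3 / (K_B * temperature_k)


# 1.0 cm^3 at 1.0 atm and 0 degrees Celsius, converted to SI units
n_atoms = number_of_atoms(
    pressure_pa=101_325.0,   # 1.0 atm
    volume_m3=1.0e-6,        # 1.0 cm^3
    temperature_k=273.15,    # 0 degrees Celsius
)
print(f"N = {n_atoms:.2e} atoms")
```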

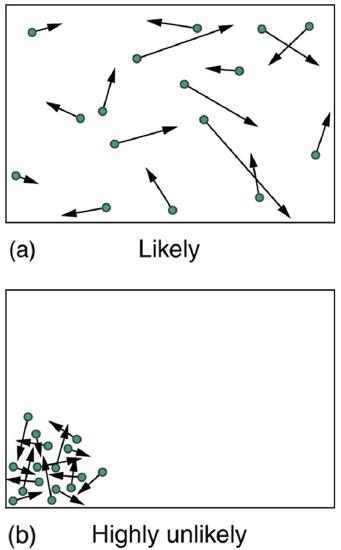

The most likely conditions (or macrostates) for a gas are those we see all the time—a random distribution of atoms in space with a Maxwell-Boltzmann distribution of speeds in random directions, as predicted by kinetic theory. This is the most disorderly and least structured condition we can imagine. In contrast, one type of very orderly and structured macrostate has all of the atoms in one corner of a container with identical velocities. There are very few ways to accomplish this (very few microstates corresponding to it), and so it is exceedingly unlikely ever to occur. (See Figure 15.7.2(b).) Indeed, it is so unlikely that we have a law saying that it is impossible, which has never been observed to be violated—the second law of thermodynamics.

The disordered condition is one of high entropy, and the ordered one has low entropy. With a transfer of energy from another system, we could force all of the atoms into one corner and have a local decrease in entropy, but at the cost of an overall increase in entropy of the universe. If the atoms start out in one corner, they will quickly disperse and become uniformly distributed and will never return to the orderly original state (Figure 15.7.2(b)). Entropy will increase. With such a large sample of atoms, it is possible—but unimaginably unlikely—for entropy to decrease. Disorder is vastly more likely than order.
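To get a feel for how unlikely an ordered gas is, consider a deliberately simplified version of the picture above. The sketch assumes each atom is independently equally likely to be in either half of the container, ignores velocities, and asks for the chance that all \(N\) atoms are found in one chosen half (a much weaker condition than one corner with identical velocities). Under those assumptions the probability is \((1/2)^{N}\), and by \(S = k\ln W\) the corresponding entropy change is \(-Nk\ln 2\). The function name `all_in_one_half` is hypothetical.

```python
from math import log, log10

K_B = 1.38e-23  # Boltzmann's constant in J/K, as quoted in the text


def all_in_one_half(n_atoms):
    """Return (log10 of probability, entropy change in J/K) for finding all
    n_atoms independent atoms in the same chosen half of a container."""
    log10_probability = -n_atoms * log10(2)  # P = (1/2) ** n_atoms
    delta_s = -n_atoms * K_B * log(2)        # k * ln(W_half / W_full)
    return log10_probability, delta_s


for n in (5, 100, 2.7e19):
    log_p, ds = all_in_one_half(n)
    print(f"N = {n:.3g}: P = 10^({log_p:.3g}), delta S = {ds:.3g} J/K")
```

The probability is returned as a base-10 logarithm because for \(2.7\times10^{19}\) atoms the value itself is far too small to represent as a floating-point number.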

The arguments that disorder and high entropy are the most probable states are quite convincing. The great Austrian physicist Ludwig Boltzmann (1844–1906), who, along with Maxwell, made so many contributions to kinetic theory, proved that the entropy of a system in a given state (a macrostate) can be written as \(S = k\ln W\), where \(k = 1.38\times10^{-23}\,\text{J/K}\) is Boltzmann's constant, and \(\ln W\) is the natural logarithm of the number of microstates \(W\) corresponding to the given macrostate. \(W\) is proportional to the probability that the macrostate will occur. Thus entropy is directly related to the probability of a state: the more likely the state, the greater its entropy. Boltzmann proved that this expression for \(S\) is equivalent to the definition \(\Delta S = Q/T\), which we have used extensively.

Thus the second law of thermodynamics is explained on a very basic level: entropy either remains the same or increases in every process. This phenomenon is due to the extraordinarily small probability of a decrease, based on the vastly larger number of microstates in systems with greater entropy. Entropy can decrease, but for any macroscopic system, this outcome is so unlikely that it will never be observed.

Example 15.7.1: Entropy Increases in a Coin Toss

Suppose you toss 100 coins starting with 60 heads and 40 tails, and you get the most likely result, 50 heads and 50 tails. What is the change in entropy?

Strategy

Noting that the number of microstates is labeled \(W\) in Table 15.7.2 for the 100-coin toss, we can use \(\Delta S = S_f - S_i = k\ln W_f - k\ln W_i\) to calculate the change in entropy.

Solution

The change in entropy is \(\Delta S = S_f - S_i = k\ln W_f - k\ln W_i\), where the subscript i stands for the initial 60 heads and 40 tails state, and the subscript f for the final 50 heads and 50 tails state. Substituting the values for \(W\) from Table 15.7.2 gives

\[\Delta S = (1.38\times10^{-23}\,\text{J/K})\left[\ln(1.0\times10^{29}) - \ln(1.4\times10^{28})\right] = 2.7\times10^{-23}\,\text{J/K}\]
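The substitution above can be reproduced numerically. The sketch below is illustrative only: `entropy_change` is a hypothetical helper, the first call uses the rounded values of \(W\) from Table 15.7.2, and the second uses exact binomial coefficients, which is an addition not found in the original solution.

```python
from math import comb, log

K_B = 1.38e-23  # Boltzmann's constant in J/K, as quoted in the text


def entropy_change(w_initial, w_final, k=K_B):
    """Delta S = k ln(W_f) - k ln(W_i) for two macrostates."""
    return k * (log(w_final) - log(w_initial))


# Rounded microstate counts from Table 15.7.2 (60/40 -> 50/50)
ds_table = entropy_change(w_initial=1.4e28, w_final=1.0e29)

# Exact counts: W = C(100, heads)
ds_exact = entropy_change(w_initial=comb(100, 40), w_final=comb(100, 50))

print(f"Using table values: {ds_table:.2e} J/K")
print(f"Using exact counts: {ds_exact:.2e} J/K")
```

The two results differ slightly in the second significant figure because the table values are rounded to two digits; both agree with the worked answer to within that rounding.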

Discussion

This increase in entropy means we have moved to a less orderly situation. It is not impossible for further tosses to produce the initial state of 60 heads and 40 tails, but it is less likely. There is about a 1 in 90 chance for that decrease in entropy (\(-2.7\times10^{-23}\,\text{J/K}\)) to occur. If we calculate the decrease in entropy to move to the most orderly state, we get \(\Delta S = -92\times10^{-23}\,\text{J/K}\). There is about a 1 in \(10^{30}\) chance of this change occurring. So while very small decreases in entropy are unlikely, slightly greater decreases are impossibly unlikely. These probabilities imply, again, that for a macroscopic system, a decrease in entropy is impossible. For example, for heat transfer to occur spontaneously from 1.00 kg of \(0^{\circ}\text{C}\) ice to its \(0^{\circ}\text{C}\) environment, there would be a decrease in entropy of \(1.22\times10^{3}\,\text{J/K}\). Given that an entropy decrease of about \(10^{-21}\,\text{J/K}\) corresponds to about a 1 in \(10^{30}\) chance, a decrease of this size (\(10^{3}\,\text{J/K}\)) is an utter impossibility. Even for a milligram of melted ice to spontaneously refreeze is impossible.
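The numbers in this discussion can be checked with a few lines of arithmetic. This is a sketch under stated assumptions, not the original authors' calculation: it uses exact binomial counts for the coins, and for the ice it assumes a latent heat of fusion of 334 kJ/kg (a standard value that is not stated in this section) with \(\Delta S = Q/T\) at 273 K.

```python
from math import comb, log

K_B = 1.38e-23   # Boltzmann's constant in J/K, as quoted in the text
N = 100
TOTAL = 2 ** N   # total number of microstates for 100 coins

# Chance that a toss of all 100 coins lands on 60 heads and 40 tails
p_60 = comb(N, 60) / TOTAL

# Chance of the single all-heads microstate
p_100 = 1 / TOTAL

# Entropy change from the 50/50 macrostate to the most orderly state (W = 1)
ds_to_ordered = K_B * (log(1) - log(comb(N, 50)))

# Entropy decrease for 1.00 kg of ice giving up its heat of fusion at 0 C
LATENT_HEAT_FUSION = 334e3  # J/kg, assumed standard value
T_ICE = 273.0               # K
ds_ice = -(1.00 * LATENT_HEAT_FUSION) / T_ICE

print(f"P(60 heads)  = 1 in {1 / p_60:.0f}")
print(f"P(100 heads) = 1 in {1 / p_100:.1e}")
print(f"dS to most orderly state = {ds_to_ordered:.2e} J/K")
print(f"dS for 1.00 kg of ice    = {ds_ice:.3g} J/K")
```

The ice result is about 24 orders of magnitude larger than the coin result, which is the point of the comparison in the text.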

PROBLEM-SOLVING STRATEGIES FOR ENTROPY

- Examine the situation to determine if entropy is involved.

- Identify the system of interest and draw a labeled diagram of the system showing energy flow.

- Identify exactly what needs to be determined in the problem (identify the unknowns). A written list is useful.

- Make a list of what is given or can be inferred from the problem as stated (identify the knowns). You must carefully identify the heat transfer, if any, and the temperature at which the process takes place. It is also important to identify the initial and final states.

- Solve the appropriate equation for the quantity to be determined (the unknown). Note that the change in entropy can be determined between any states by calculating it for a reversible process.

- Substitute the known values along with their units into the appropriate equation, and obtain numerical solutions complete with units.

- Check the answer to see if it is reasonable: Does it make sense? For example, total entropy should increase for any real process or be constant for a reversible process. Disordered states should be more probable and have greater entropy than ordered states.

Summary

- Disorder is far more likely than order, which can be seen statistically.

- The entropy of a system in a given state (a macrostate) can be written as \(S = k\ln W\), where \(k = 1.38\times10^{-23}\,\text{J/K}\) is Boltzmann's constant, and \(\ln W\) is the natural logarithm of the number of microstates \(W\) corresponding to the given macrostate.

Glossary

- macrostate: an overall property of a system

- microstate: each sequence within a larger macrostate

- statistical analysis: using statistics to examine data, such as counting microstates and macrostates