10.2: Adiabatic Invariance


One more application of the Hamiltonian formalism in classical mechanics is the solution of the following problem.⁹ Earlier in the course, we already studied some effects of time variation of parameters of a single oscillator (Sec. 5.5) and of coupled oscillators (Sec. 6.5). However, those discussions focused on the case when the rate of the parameter variation is comparable with the system's own oscillation frequency (or frequencies). Another practically important case is when some parameter of the system (let us call it \(\lambda\)) is changed much more slowly (adiabatically¹⁰),

\[\left|\frac{\dot{\lambda}}{\lambda}\right| \ll \frac{1}{\tau}, \tag{28}\]

where \(\tau\) is a typical period of oscillations in the system. Let us consider a 1D system whose Hamiltonian \(H(q, p, \lambda)\) depends on time only via such a slow evolution of the parameter \(\lambda = \lambda(t)\), and whose initial energy restricts the system's motion to a finite coordinate interval - see, e.g., Figure 3.2c.

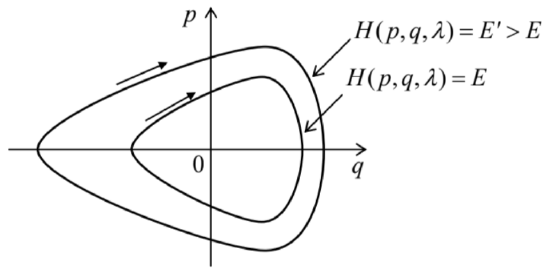

Then, as we know from Sec. 3.3, if the parameter \(\lambda\) is constant, the system performs a periodic (though not necessarily sinusoidal) motion back and forth along the \(q\)-axis or, in a different language, along a closed trajectory on the phase plane \([q, p]\) - see Fig. 10.1.¹¹ According to Eq. (8), in this case \(H\) is constant along the trajectory. (To distinguish this particular value from the Hamiltonian function as such, I will call it \(E\), implying that this constant coincides with the full mechanical energy \(E\), as it does for the Hamiltonian (10), though this assumption is not necessary for the calculation made below.)

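The oscillation period \(\tau\) may be calculated as a contour integral along this closed trajectory:

\[\tau \equiv \int_0^{\tau} dt = \oint \frac{dt}{dq}\,dq \equiv \oint \frac{1}{\dot{q}}\,dq. \tag{29}\]

Using the first of the Hamilton equations (7), we may represent this integral as

\[\tau = \oint \frac{1}{\partial H/\partial p}\,dq. \tag{30}\]

At each given point \(q\), \(H = E\) is a function of \(p\) alone, so that we may flip the partial derivative in the denominator just as the full derivative, and rewrite Eq. (30) as

\[\tau = \oint \frac{\partial p}{\partial E}\,dq. \tag{31}\]

For the particular Hamiltonian (10), this relation is immediately reduced to Eq. (3.27), now in the form of a contour integral:

\[\tau = \left(\frac{m_{\mathrm{ef}}}{2}\right)^{1/2} \oint \frac{dq}{\left[E - U_{\mathrm{ef}}(q)\right]^{1/2}}. \tag{32}\]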
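Eq. (32) is easy to evaluate numerically. The following is only a minimal sketch, not part of the original derivation: it assumes a Hamiltonian of the form \(p^2/2m_{\mathrm{ef}} + U_{\mathrm{ef}}(q)\) with a symmetric potential, \(U_{\mathrm{ef}}(q) = U_{\mathrm{ef}}(-q)\), so that the turning points are \(q = \pm A\) and \(E = U_{\mathrm{ef}}(A)\). The function name `period`, the two test potentials, and all parameter values are illustrative choices.

```python
import math

def period(U, A, m_ef=1.0, n=2000):
    """Oscillation period from Eq. (32) for a symmetric potential U(q) = U(-q).

    A is the amplitude, so the turning points are q = -A, q = +A and E = U(A).
    """
    E = U(A)
    h = math.pi / n
    half_contour = 0.0
    for i in range(n):
        # The substitution q = A*sin(theta) removes the inverse-square-root
        # singularity at the turning points; midpoints avoid theta = +-pi/2.
        theta = -0.5 * math.pi + (i + 0.5) * h
        q = A * math.sin(theta)
        half_contour += A * math.cos(theta) / math.sqrt(E - U(q)) * h
    # The closed contour passes the interval [-A, +A] twice.
    return math.sqrt(m_ef / 2.0) * 2.0 * half_contour

def harmonic(q):
    # kappa_ef = 4 and m_ef = 1 (assumed values), so that omega_0 = 2
    return 0.5 * 4.0 * q * q

def quartic(q):
    # Hardening nonlinear potential (assumed, for illustration only)
    return 0.5 * q * q + 0.25 * q ** 4

# Harmonic case: the period should not depend on the amplitude
print(period(harmonic, 0.1), period(harmonic, 3.0), math.pi)
# Nonlinear case: the period depends on the amplitude, i.e. on the energy E
print(period(quartic, 0.5), period(quartic, 2.0))
```

For the harmonic potential, both printed periods should agree with \(2\pi/\omega_0 = \pi\) regardless of the amplitude, while for the quartic potential the period changes with the energy.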

Fig. 10.1. Phase-plane representation of periodic oscillations of a 1D Hamiltonian system, for two values of energy (schematically).

Naively, it may seem that these formulas may also be used to find the change of the motion's period when the parameter \(\lambda\) is changed adiabatically, for example, by plugging the given functions \(m_{\mathrm{ef}}(\lambda)\) and \(U_{\mathrm{ef}}(q, \lambda)\) into Eq. (32). However, there is no guarantee that the energy \(E\) in that integral would stay constant as the parameter changes, and indeed we will see below that this is not necessarily the case. Even more interestingly, in the most important case of the harmonic oscillator (\(U_{\mathrm{ef}} = \kappa_{\mathrm{ef}} q^2/2\)), whose oscillation period \(\tau\) does not depend on \(E\) (see Eq. (3.29) and its discussion), the variation of the period in the adiabatic limit (28) may be readily predicted: \(\tau(\lambda) = 2\pi/\omega_0(\lambda) = 2\pi\left[m_{\mathrm{ef}}(\lambda)/\kappa_{\mathrm{ef}}(\lambda)\right]^{1/2}\), but the dependence of the oscillation energy \(E\) (and hence of the oscillation amplitude) on \(\lambda\) is not immediately obvious.
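The statement that \(E\) need not stay constant may be illustrated by a simple numerical experiment. The sketch below is an assumption-laden illustration rather than a part of the argument: it takes a harmonic oscillator with \(m_{\mathrm{ef}} = 1\), lets its frequency \(\omega_0\) play the role of \(\lambda\), and ramps it linearly from 1 to 2 over a time much longer than the oscillation period, so that the condition (28) is satisfied. The function name `simulate`, the ramp shape, and the step size are all hypothetical choices; the integrator is the standard velocity-Verlet scheme.

```python
import math

def simulate(omega_a=1.0, omega_b=2.0, t_ramp=2000.0, dt=0.01):
    """Integrate q'' = -omega(t)^2 q (unit mass) while omega is slowly ramped.

    Returns (E_start, E_end, E_start/omega_start, E_end/omega_end).
    """
    def omega(t):
        # Linear ramp from omega_a to omega_b during 0 <= t <= t_ramp
        return omega_a + (omega_b - omega_a) * min(t / t_ramp, 1.0)

    def energy(q, p, t):
        return 0.5 * p * p + 0.5 * omega(t) ** 2 * q * q

    q, p, t = 1.0, 0.0, 0.0
    e_start = energy(q, p, t)
    for _ in range(int(round(t_ramp / dt))):
        # Velocity-Verlet step with a time-dependent restoring force
        p -= 0.5 * dt * omega(t) ** 2 * q
        q += dt * p
        t += dt
        p -= 0.5 * dt * omega(t) ** 2 * q
    e_end = energy(q, p, t)
    return e_start, e_end, e_start / omega_a, e_end / omega(t)

e_start, e_end, r_start, r_end = simulate()
print("energy ratio E_end/E_start:", e_end / e_start)
print("ratio E/omega_0 before and after:", r_start, r_end)
```

In such a run, the energy changes substantially, so that plugging a fixed \(E\) into Eq. (32) would indeed be wrong in general. The second printed line shows the ratio \(E/\omega_0\), whose behavior is the subject of the derivation that follows. Making `t_ramp` comparable with the oscillation period violates the condition (28), and the picture may change.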

In order to address this issue, let us use Eq. (8) (with \(E = H\)) to represent the rate of the energy change with \(\lambda(t)\), i.e. in time, as

\[\frac{dE}{dt} = \frac{\partial H}{\partial t} = \frac{\partial H}{\partial \lambda}\frac{d\lambda}{dt}. \tag{33}\]

Since we are interested in a very slow (adiabatic) time evolution of energy, we can average Eq. (33) over fast oscillations in the system, for example over one oscillation period \(\tau\), treating \(d\lambda/dt\) as a constant during this averaging. (This is the most critical point of this argument, because at any non-vanishing rate of parameter change the oscillations are, strictly speaking, non-periodic.¹²) The averaging yields

\[\overline{\frac{dE}{dt}} = \frac{d\lambda}{dt}\,\overline{\frac{\partial H}{\partial \lambda}} \equiv \frac{d\lambda}{dt}\,\frac{1}{\tau}\int_0^{\tau}\frac{\partial H}{\partial \lambda}\,dt. \tag{34}\]

Transforming this time integral to the contour one, just as we did at the transition from Eq. (29) to Eq. (30), and then using Eq. (31) for \(\tau\), we get

\[\overline{\frac{dE}{dt}} = \frac{d\lambda}{dt}\,\frac{\displaystyle\oint \frac{\partial H/\partial \lambda}{\partial H/\partial p}\,dq}{\displaystyle\oint \frac{\partial p}{\partial E}\,dq}. \tag{35}\]

At each point \(q\) of the contour, \(H\) is a function of not only \(\lambda\), but also of \(p\), which may also be \(\lambda\)-dependent, so that if \(E\) is fixed, the partial differentiation of the relation \(E = H\) over \(\lambda\) yields

\[\frac{\partial H}{\partial \lambda} + \frac{\partial H}{\partial p}\frac{\partial p}{\partial \lambda} = 0, \quad \text{i.e.} \quad \frac{\partial H/\partial \lambda}{\partial H/\partial p} = -\frac{\partial p}{\partial \lambda}. \tag{36}\]

Plugging the last relation into Eq. (35), we get

\[\overline{\frac{dE}{dt}} = -\frac{d\lambda}{dt}\,\frac{\displaystyle\oint \frac{\partial p}{\partial \lambda}\,dq}{\displaystyle\oint \frac{\partial p}{\partial E}\,dq}. \tag{37}\]

Since the left-hand side of Eq. (37) and the derivative \(d\lambda/dt\) do not depend on \(q\), we may move them into the integrals over \(q\) as constants, and rewrite Eq. (37) as

\[\oint \left(\frac{\partial p}{\partial E}\,\overline{\frac{dE}{dt}} + \frac{\partial p}{\partial \lambda}\frac{d\lambda}{dt}\right) dq = 0. \tag{38}\]

Now let us consider the following integral over the same phase-plane contour,

\[J \equiv \frac{1}{2\pi}\oint p\,dq, \tag{39}\]

called the action variable. Just to understand its physical sense, let us calculate \(J\) for a harmonic oscillator (14). As we know very well from Chapter 5, for such an oscillator, \(q = A\cos\Psi\), \(p = -m_{\mathrm{ef}}\omega_0 A\sin\Psi\) (with \(\Psi = \omega_0 t + \text{const}\)), so that \(J\) may be easily expressed either via the oscillation amplitude \(A\), or via the oscillation energy \(E = H = m_{\mathrm{ef}}\omega_0^2 A^2/2\):

\[J = \frac{1}{2\pi}\oint p\,dq = \frac{1}{2\pi}\int_{\Psi=0}^{\Psi=2\pi}\left(-m_{\mathrm{ef}}\omega_0 A\sin\Psi\right) d\left(A\cos\Psi\right) = \frac{m_{\mathrm{ef}}\omega_0}{2}A^2 = \frac{E}{\omega_0}. \tag{40}\]

Returning to a general system with an adiabatically changed parameter \(\lambda\), let us use the definition of \(J\), Eq. (39), to calculate its time derivative, again taking into account that at each point \(q\) of the trajectory, \(p\) is a function of \(E\) and \(\lambda\):

\[\frac{dJ}{dt} = \frac{1}{2\pi}\oint \frac{dp}{dt}\,dq = \frac{1}{2\pi}\oint \left(\frac{\partial p}{\partial E}\frac{dE}{dt} + \frac{\partial p}{\partial \lambda}\frac{d\lambda}{dt}\right) dq. \tag{41}\]

Within the accuracy of our approximation, in which the contour integrals (38) and (41) are calculated along a closed trajectory, the factor \(dE/dt\) is indistinguishable from its time average, and these integrals coincide, so that the result (38) is applicable to Eq. (41) as well. Hence, we have finally arrived at a very important result: at a slow parameter variation, \(dJ/dt = 0\), i.e. the action variable remains constant:

\[J = \text{const}. \tag{42}\]

This is the famous adiabatic invariance.¹³ In particular, according to Eq. (40), in a harmonic oscillator, the energy of oscillations changes in proportion to its own (slowly changing) frequency.

Before moving on, let me briefly note that the adiabatic invariance is not the only application of the action variable \(J\). Since the initial choice of generalized coordinates and velocities (and hence the generalized momenta) in analytical mechanics is arbitrary (see Sec. 2.1), it is almost evident that \(J\) may be taken for a new generalized momentum corresponding to a certain new generalized coordinate \(\Theta\),¹⁴ and that the pair \(\{J, \Theta\}\) should satisfy the Hamilton equations (7), in particular,

\[\frac{d\Theta}{dt} = \frac{\partial H}{\partial J}. \tag{43}\]

Following the commitment of Sec. 1 (made there for the "old" arguments \(q_j, p_j\)), before the differentiation on the right-hand side of Eq. (43), \(H\) should be expressed as a function (besides \(t\)) of the "new" arguments \(J\) and \(\Theta\). For time-independent Hamiltonian systems, \(H\) is uniquely defined by \(J\) - see, e.g., Eq. (40). Hence in this case the right-hand side of Eq. (43) does not depend on either \(t\) or \(\Theta\), so that according to that equation, \(\Theta\) (called the angle variable) is a linear function of time:

\[\Theta = \frac{\partial H}{\partial J}\,t + \text{const}. \tag{44}\]

For a harmonic oscillator, according to Eq. (40), the derivative \(\partial H/\partial J = \partial E/\partial J\) is just \(\omega_0 \equiv 2\pi/\tau\), so that \(\Theta = \omega_0 t + \text{const}\), i.e. it is just the full phase \(\Psi\) that was repeatedly used in this course - especially in Chapter 5. It may be shown that a more general form of this relation,

\[\frac{\partial H}{\partial J} = \frac{2\pi}{\tau},\]

is valid for an arbitrary system described by Eq. (10). Thus, Eq. (44) becomes

\[\Theta = 2\pi\frac{t}{\tau} + \text{const}.\]

This means that for an arbitrary (nonlinear) 1D oscillator, the angle variable \(\Theta\) is a convenient generalization of the full phase \(\Psi\). For this reason, the variables \(J\) and \(\Theta\) provide a convenient tool for the discussion of certain fine points of the dynamics of strongly nonlinear oscillators - for whose discussion I, unfortunately, do not have time/space.¹⁵
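The relation between \(\partial H/\partial J\) and the period, quoted above without proof, may be checked numerically for a nonlinear oscillator. The following is a minimal sketch under stated assumptions: a Hamiltonian of the form \(p^2/2m_{\mathrm{ef}} + U_{\mathrm{ef}}(q)\) with a symmetric potential (turning points at \(q = \pm A\)), for which \(\partial p/\partial E = m_{\mathrm{ef}}/p\). The helper `contour_integrals`, the quartic test potential, and the finite-difference step are hypothetical choices made only for this illustration.

```python
import math

def contour_integrals(U, A, m_ef=1.0, n=2000):
    """Return (J, tau) for a symmetric potential U with turning points +-A.

    J is the action variable, (1/2pi) * contour integral of p dq, and
    tau is the period, the contour integral of (dp/dE) dq.
    """
    E = U(A)
    h = math.pi / n
    sum_p, sum_dp_dE = 0.0, 0.0
    for i in range(n):
        # q = A*sin(theta) keeps both integrands smooth at the turning points
        theta = -0.5 * math.pi + (i + 0.5) * h
        q = A * math.sin(theta)
        dq = A * math.cos(theta) * h
        p = math.sqrt(2.0 * m_ef * (E - U(q)))  # upper branch, p > 0
        sum_p += p * dq
        sum_dp_dE += (m_ef / p) * dq            # dp/dE = m_ef / p
    # The lower branch (p < 0, dq < 0) gives equal contributions: factor 2
    return 2.0 * sum_p / (2.0 * math.pi), 2.0 * sum_dp_dE

def U(q):
    # Strongly nonlinear (hardening) potential, assumed for illustration
    return 0.5 * q * q + 0.25 * q ** 4

A, dA = 1.0, 1e-3
J_plus, _ = contour_integrals(U, A + dA)
J_minus, _ = contour_integrals(U, A - dA)
_, tau = contour_integrals(U, A)

# Central finite difference for dE/dJ along the family of closed trajectories
dE_dJ = (U(A + dA) - U(A - dA)) / (J_plus - J_minus)
print("dE/dJ   =", dE_dJ)
print("2pi/tau =", 2.0 * math.pi / tau)
```

The two printed numbers should agree within the finite-difference error. For the harmonic potential \(q^2/2\) with \(m_{\mathrm{ef}} = 1\), the same helper should reproduce Eq. (40), \(J = E/\omega_0 = A^2/2\).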

9 Various aspects of this problem and its quantum-mechanical extensions were first discussed by L. Le Cornu (1895), Lord Rayleigh (1902), H. Lorentz (1911), P. Ehrenfest (1916), and M. Born and V. Fock (1928).

10 This term is also used in thermodynamics and statistical mechanics, where it implies not only a slow parameter variation (if any) but also thermal insulation of the system - see, e.g., SM Sec. 1.3. Evidently, the latter condition is irrelevant in our current context.

11 In Sec. 5.6, we discussed this plane for the particular case of sinusoidal oscillations - see Figure 5.9.

12 Because of the implicit nature of this conjecture (which is very close to the assumptions made at the derivation of the reduced equations in Sec. 5.3), new, stricter (but also much more cumbersome) proofs of the final Eq. (42) are still being offered in the literature - see, e.g., C. Wells and S. Siklos, Eur. J. Phys. 28, 105 (2007) and/or A. Lobo et al., Eur. J. Phys. 33, 1063 (2012).

13 For certain particular oscillators, e.g., a point pendulum, Eq. (42) may be also proved directly - an exercise highly recommended to the reader.

14 This, again, is a plausible argument but not a strict proof. Indeed: though, according to its definition (39), \(J\) is nothing more than a sum of several (formally, an infinite number of) values of the momentum \(p\), they are not independent, but have to be selected on the same closed trajectory on the phase plane. For more mathematical rigor, the reader is referred to Sec. 45 of Mechanics by Landau and Lifshitz (which was repeatedly cited above), which discusses the general rules of the so-called canonical transformations from one set of Hamiltonian arguments to another one - say from \(\{p, q\}\) to \(\{J, \Theta\}\).

15 An interested reader may be referred, for example, to Chapter 6 in J. Jose and E. Saletan, Classical Dynamics, Cambridge U. Press, 1998.