9.2: Free Energy of the One-Dimensional Ising Model

The \(N\)-spin one-dimensional Ising model consists of a horizontal chain of spins, \(s_1, s_2, \ldots, s_N\), where \(s_i = \pm 1\).

A vertical magnetic field H is applied, and only nearest neighbor spins interact, so the Hamiltonian is

\[ \mathcal{H}_N = -J \sum_{i=1}^{N-1} s_i s_{i+1} - mH \sum_{i=1}^{N} s_i. \]

For this system the partition function is

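\[ Z_N = \sum_{\text{states}} e^{-\beta \mathcal{H}_N} = \sum_{s_1=\pm 1} \sum_{s_2=\pm 1} \cdots \sum_{s_N=\pm 1} e^{K \sum_{i=1}^{N-1} s_i s_{i+1} + L \sum_{i=1}^{N} s_i}, \]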

where

\[ K \equiv \frac{J}{k_B T} \quad \text{and} \quad L \equiv \frac{mH}{k_B T}. \]

If \(J = 0\) (the ideal paramagnet), the partition function factorizes, and the problem is easily solved using the “summing Hamiltonian yields factorizing partition function” theorem. If \(H = 0\), the partition function nearly factorizes, and the problem is not too difficult. (See problem 9.3.) But in general, there is no factorization.
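Before doing anything clever, it helps to have a brute-force number to compare against. The sketch below sums the Boltzmann factor over all \(2^N\) states directly, for an open chain with the dimensionless couplings \(K\) and \(L\) defined above. The function name `brute_force_Z` and the sample values are my own choices for illustration, and the approach is only practical for small \(N\).

```python
import itertools
import math


def brute_force_Z(N, K, L):
    """Sum exp(K * sum_i s_i s_{i+1} + L * sum_i s_i) over all 2**N spin states."""
    Z = 0.0
    for spins in itertools.product((+1, -1), repeat=N):
        bond_sum = sum(spins[i] * spins[i + 1] for i in range(N - 1))
        field_sum = sum(spins)
        Z += math.exp(K * bond_sum + L * field_sum)
    return Z


if __name__ == "__main__":
    # Generic case: no factorization, so we simply enumerate.
    print(brute_force_Z(N=8, K=0.5, L=0.2))

    # J = 0 (so K = 0): independent spins, Z_N should equal (2 cosh L)**N.
    print(brute_force_Z(N=8, K=0.0, L=0.2), (2 * math.cosh(0.2)) ** 8)
```

The cost doubles with every added spin, which is exactly why the induction argument that follows is worth the trouble.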

We will solve the problem using induction on the size of the system. If we add one more spin (spin number \(N + 1\)), then the change in the system’s energy depends only upon the state of the new spin and of the previous spin (spin number \(N\)). Define \(Z_N^\uparrow\) not as the sum over all states, but as the sum over all states in which the last (i.e. \(N\)th) spin is up, and define \(Z_N^\downarrow\) as the sum over all states in which the last spin is down, so that

\[ Z_N = Z_N^\uparrow + Z_N^\downarrow. \]

Now, if one more spin is added, the extra term in \(e^{-\beta \mathcal{H}}\) results in a factor of

\[ e^{K s_N s_{N+1} + L s_{N+1}}. \]

From this, it is very easy to see that

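\[ Z_{N+1}^\uparrow = Z_N^\uparrow e^{K+L} + Z_N^\downarrow e^{-K+L} \]

\[ Z_{N+1}^\downarrow = Z_N^\uparrow e^{-K-L} + Z_N^\downarrow e^{K-L}. \]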
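The pair of recursion relations can be iterated directly on a computer. Here is a minimal sketch, assuming an open chain that starts from a single spin with \(Z_1^\uparrow = e^{L}\) and \(Z_1^\downarrow = e^{-L}\); the function name `partition_by_recursion` is hypothetical.

```python
import math


def partition_by_recursion(N, K, L):
    """Return (Z_up, Z_down) for an N-spin open chain, adding one spin at a time."""
    # One-spin chain: only the field term contributes.
    z_up, z_down = math.exp(L), math.exp(-L)
    for _ in range(N - 1):
        # New last spin up: previous spin up gives e^{K+L}, previous down gives e^{-K+L}.
        new_up = z_up * math.exp(K + L) + z_down * math.exp(-K + L)
        # New last spin down: previous spin up gives e^{-K-L}, previous down gives e^{K-L}.
        new_down = z_up * math.exp(-K - L) + z_down * math.exp(K - L)
        z_up, z_down = new_up, new_down
    return z_up, z_down


if __name__ == "__main__":
    z_up, z_down = partition_by_recursion(N=8, K=0.5, L=0.2)
    print("Z_8 =", z_up + z_down)
```

Each added spin costs a fixed handful of operations, so the work grows linearly with \(N\) rather than as \(2^N\).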

This is really the end of the physics of this derivation. The rest is mathematics.

So put on your mathematical hats and look at the pair of equations above. What do you see? A matrix equation!

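\[ \begin{pmatrix} Z_{N+1}^\uparrow \\ Z_{N+1}^\downarrow \end{pmatrix} = \begin{pmatrix} e^{K+L} & e^{-K+L} \\ e^{-K-L} & e^{K-L} \end{pmatrix} \begin{pmatrix} Z_N^\uparrow \\ Z_N^\downarrow \end{pmatrix}. \]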

We introduce the notation

\[ \mathbf{w}_{N+1} = T \mathbf{w}_N \]

for the matrix equation. The 2 × 2 matrix \(T\), which acts to add one more spin to the chain, is called the transfer matrix. Of course, the entire chain can be built by applying \(T\) repeatedly to an initial chain of one site, that is,

\[ \mathbf{w}_{N+1} = T^N \mathbf{w}_1, \]

where

\[ \mathbf{w}_1 = \begin{pmatrix} e^{L} \\ e^{-L} \end{pmatrix}. \]

The fact that we are raising a matrix to a power suggests that we should diagonalize it. The transfer matrix \(T\) has eigenvalues \(\lambda_A\) and \(\lambda_B\) (labeled so that \(|\lambda_A| > |\lambda_B|\)) and corresponding eigenvectors \(\mathbf{x}_A\) and \(\mathbf{x}_B\). Like any other vector, \(\mathbf{w}_1\) can be expanded in terms of the eigenvectors

\[ \mathbf{w}_1 = c_A \mathbf{x}_A + c_B \mathbf{x}_B \]

and in this form it is very easy to see what happens when \(\mathbf{w}_1\) is multiplied by \(T\) a total of \(N\) times:

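\[ \begin{aligned} \mathbf{w}_{N+1} = T^N \mathbf{w}_1 &= c_A T^N \mathbf{x}_A + c_B T^N \mathbf{x}_B \\ &= c_A \lambda_A^N \mathbf{x}_A + c_B \lambda_B^N \mathbf{x}_B. \end{aligned} \]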

So the partition function is

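\[ Z_{N+1} = Z_{N+1}^\uparrow + Z_{N+1}^\downarrow = c_A \lambda_A^N (x_A^\uparrow + x_A^\downarrow) + c_B \lambda_B^N (x_B^\uparrow + x_B^\downarrow). \]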
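To see every piece of this formula at work, here is a hedged sketch that carries out the expansion numerically for the 2 × 2 transfer matrix. It assumes the standard trace and determinant formula for the eigenvalues of a 2 × 2 matrix and uses the unnormalized eigenvectors \((b, \lambda - a)\), where \(a\) and \(b\) are the top row of \(T\). That eigenvector choice and the function name `partition_from_eigen` are mine; any other normalization would change \(c_A\) and \(c_B\) but not the product that enters \(Z\).

```python
import math


def partition_from_eigen(n_spins, K, L):
    """Z for an open chain of n_spins spins via the eigen-expansion of w_1."""
    # Transfer matrix T = [[a, b], [c, d]].
    a, b = math.exp(K + L), math.exp(-K + L)
    c, d = math.exp(-K - L), math.exp(K - L)

    # Eigenvalues of a 2 x 2 matrix from its trace and determinant.
    tr, det = a + d, a * d - b * c
    root = math.sqrt(tr * tr - 4.0 * det)  # equals sqrt((a - d)**2 + 4 b c) > 0
    lam_A, lam_B = (tr + root) / 2.0, (tr - root) / 2.0

    # Unnormalized eigenvectors (up component, down component).
    x_A = (b, lam_A - a)
    x_B = (b, lam_B - a)

    # Solve w_1 = c_A x_A + c_B x_B for the expansion coefficients.
    w1 = (math.exp(L), math.exp(-L))
    c_A = (w1[1] - (w1[0] / b) * (lam_B - a)) / (lam_A - lam_B)
    c_B = w1[0] / b - c_A

    N = n_spins - 1  # number of times T is applied to w_1
    return (c_A * lam_A ** N * (x_A[0] + x_A[1])
            + c_B * lam_B ** N * (x_B[0] + x_B[1]))


if __name__ == "__main__":
    print(partition_from_eigen(n_spins=8, K=0.5, L=0.2))
```

The result can be compared against any direct evaluation of the partition function for the same \(N\), \(K\), and \(L\).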

By diagonalizing matrix \(T\) (that is, by finding both its eigenvalues and its eigenvectors) we could find every element in the right hand side of the above equation, and hence we could find the partition function \(Z_N\) for any \(N\). But of course we are really interested only in the thermodynamic limit \(N \to \infty\). Because \(|\lambda_A| > |\lambda_B|\), \(\lambda_A^N\) dominates \(\lambda_B^N\) in the thermodynamic limit, and

\[ Z_{N+1} \approx c_A \lambda_A^N (x_A^\uparrow + x_A^\downarrow) \]

provided that \(c_A (x_A^\uparrow + x_A^\downarrow) \neq 0\). Now,

\[ F_{N+1} = -k_B T \ln Z_{N+1} \approx -k_B T N \ln \lambda_A - k_B T \ln[c_A (x_A^\uparrow + x_A^\downarrow)], \]

and this approximation becomes exact in the thermodynamic limit. Thus the free energy per spin is

\[ f(K, L) = \lim_{N \to \infty} \frac{F_{N+1}(K, L)}{N+1} = -k_B T \ln \lambda_A. \]
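The limit can be watched numerically. The sketch below is an illustration under my own assumptions: it computes \(\ln Z_N\) by adding one spin at a time, rescaling at every step so that nothing overflows, and compares \((\ln Z_N)/N\) with \(\ln \lambda_A\), where \(\lambda_A\) comes from the trace and determinant of the 2 × 2 transfer matrix. The names `log_Z` and `larger_eigenvalue` are hypothetical.

```python
import math


def log_Z(N, K, L):
    """ln Z_N for an open chain, built one spin at a time with rescaling."""
    z_up, z_down = math.exp(L), math.exp(-L)
    log_scale = 0.0  # accumulated logarithm of the factors divided out so far
    for _ in range(N - 1):
        new_up = z_up * math.exp(K + L) + z_down * math.exp(-K + L)
        new_down = z_up * math.exp(-K - L) + z_down * math.exp(K - L)
        total = new_up + new_down
        z_up, z_down = new_up / total, new_down / total
        log_scale += math.log(total)
    return log_scale + math.log(z_up + z_down)


def larger_eigenvalue(K, L):
    """Larger eigenvalue of the transfer matrix from its trace and determinant."""
    tr = math.exp(K + L) + math.exp(K - L)
    det = math.exp(2.0 * K) - math.exp(-2.0 * K)
    return (tr + math.sqrt(tr * tr - 4.0 * det)) / 2.0


if __name__ == "__main__":
    K, L = 0.5, 0.2
    target = math.log(larger_eigenvalue(K, L))
    for N in (10, 100, 1000, 10000):
        # The difference should shrink roughly like 1/N.
        print(N, log_Z(N, K, L) / N - target)
```

Since \(f = -k_B T \lim (\ln Z_N)/N\), the shrinking difference is the correction term \(-k_B T \ln[c_A (x_A^\uparrow + x_A^\downarrow)]\) being divided away by the system size.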

So to find the free energy we only need to find the larger eigenvalue of T: we don’t need to find the smaller eigenvalue, and we don’t need to find the eigenvectors!

It is a simple matter to find the eigenvalues of our transfer matrix T. They are the two roots of

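\[ \det \begin{pmatrix} e^{K+L} - \lambda & e^{-K+L} \\ e^{-K-L} & e^{K-L} - \lambda \end{pmatrix} = 0 \]

\[ (\lambda - e^{K+L})(\lambda - e^{K-L}) - e^{-2K} = 0 \]

\[ \lambda^2 - 2 e^{K} \cosh L \, \lambda + e^{2K} - e^{-2K} = 0, \]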

which are

\[ \lambda = e^{K} \left[ \cosh L \pm \sqrt{\cosh^2 L - 1 + e^{-4K}} \right]. \]

It is clear that both eigenvalues are real, and that the larger one is positive, so

\[ \lambda_A = e^{K} \left[ \cosh L + \sqrt{\sinh^2 L + e^{-4K}} \right]. \]

Finally, using equation (9.17), we find the free energy per spin

\[ f(T, H) = -J - k_B T \ln \left[ \cosh \frac{mH}{k_B T} + \sqrt{\sinh^2 \frac{mH}{k_B T} + e^{-4J/k_B T}} \right]. \]
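As a sanity check on this result (my own addition, not part of the derivation above), the two special cases mentioned at the start of the section can be read off directly. Setting \(J = 0\) makes the square root equal to \(\cosh(mH/k_B T)\), and setting \(H = 0\) makes it equal to \(e^{-2J/k_B T}\):

\[ f(T, H)\big|_{J=0} = -k_B T \ln \left[ 2 \cosh \frac{mH}{k_B T} \right], \qquad f(T, 0) = -J - k_B T \ln \left[ 1 + e^{-2J/k_B T} \right] = -k_B T \ln \left[ 2 \cosh \frac{J}{k_B T} \right]. \]

The first is the free energy per spin of the ideal paramagnet, as it should be.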

Results

Knowing the free energy, we can take derivatives to find any thermodynamic quantity (see problem 9.2). Here I’ll sketch and discuss the results obtained through those derivatives.
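For readers who would rather let a computer take the derivatives, here is a rough numerical sketch. It assumes units in which \(k_B = 1\) and \(m = 1\), uses simple central differences with step sizes I picked by hand, and takes the heat capacity per spin to be \(-T\,\partial^2 f/\partial T^2\) and the susceptibility per spin to be \(-\partial^2 f/\partial H^2\). The function names are hypothetical.

```python
import math


def free_energy_per_spin(T, H, J=1.0, m=1.0, kB=1.0):
    """f(T, H) for the one-dimensional Ising chain in the thermodynamic limit."""
    K = J / (kB * T)
    L = m * H / (kB * T)
    return -J - kB * T * math.log(
        math.cosh(L) + math.sqrt(math.sinh(L) ** 2 + math.exp(-4.0 * K))
    )


def heat_capacity(T, H=0.0, J=1.0, dT=1e-3):
    """Heat capacity per spin, c = -T d^2 f / dT^2, by central differences."""
    f = free_energy_per_spin
    d2f = (f(T + dT, H, J) - 2.0 * f(T, H, J) + f(T - dT, H, J)) / dT ** 2
    return -T * d2f


def susceptibility(T, H=0.0, J=1.0, dH=1e-3):
    """Susceptibility per spin, chi = -d^2 f / dH^2, by central differences."""
    f = free_energy_per_spin
    d2f = (f(T, H + dH, J) - 2.0 * f(T, H, J) + f(T, H - dH, J)) / dH ** 2
    return -d2f


if __name__ == "__main__":
    for T in (0.5, 1.0, 2.0, 4.0):
        print(T, heat_capacity(T), susceptibility(T, J=1.0), susceptibility(T, J=-1.0))
```

Passing `J=0.0` gives the paramagnet, and a negative `J` gives the antiferromagnet, so the same three functions cover every case discussed in this section.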

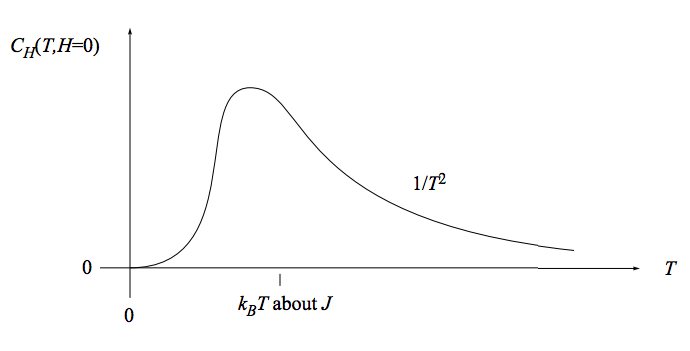

The heat capacity at constant, vanishing, magnetic field is sketched here:

Do you think an experimentalist needs a magnet to probe magnetic phenomena? This graph shows that a magnet is not required: the magnetic effects result in a bump in the heat capacity near \(k_B T = J\), even when no magnetic field is applied.
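A short calculation, sketched here under the assumption that the heat capacity is quoted per spin, shows where that bump comes from. At \(H = 0\) the free energy per spin reduces to \(f = -k_B T \ln[2 \cosh K]\) with \(K = J/k_B T\), so the energy per spin and the heat capacity per spin are

\[ u = \frac{\partial (f/k_B T)}{\partial (1/k_B T)} = -J \tanh K, \qquad c = \frac{\partial u}{\partial T} = k_B K^2 \, \mathrm{sech}^2 K. \]

This vanishes at both high and low temperature, and its maximum falls where \(K \tanh K = 1\), that is, at \(K \approx 1.20\) or \(k_B T \approx 0.83\,J\).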

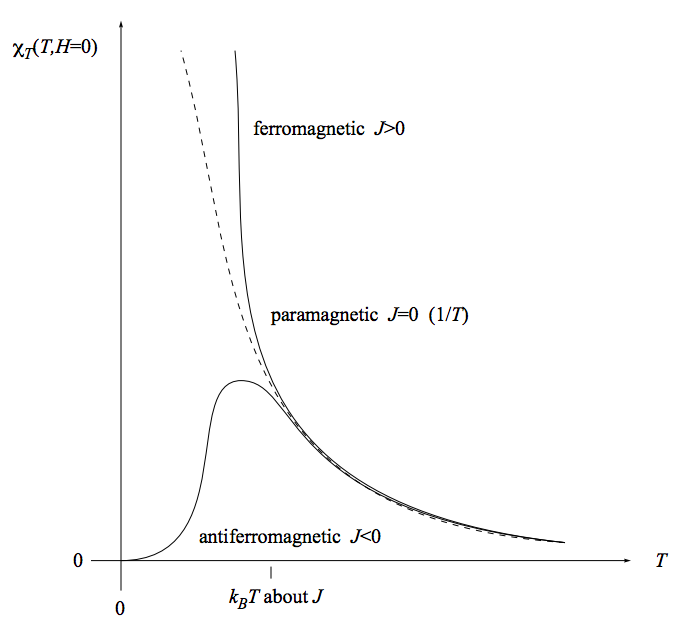

The magnetic susceptibility is sketched here:

We have already seen that for independent spins (paramagnet, \(J = 0\)) the susceptibility falls like \(1/T\) with temperature (the “Curie law”). Interacting spins at high temperature (\(k_B T \gg J\)) behave approximately the same way. But at low temperatures, the susceptibility for a ferromagnet exceeds the susceptibility for a paramagnet, while the susceptibility for an antiferromagnet undershoots the susceptibility for a paramagnet. This makes sense: For a paramagnet, the external magnetic field is inducing the spins to align. For a ferromagnet, both the external magnetic field and the tendency of neighboring spins to align are inducing the spins to align. For an antiferromagnet, the external magnetic field is inducing the spins to align, but the tendency of neighboring spins to antialign is opposing that inducement.
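The comparison can be made quantitative with one more derivative. This is a sketch of my own, taking the magnetization per spin to be \(M = -\partial f/\partial H\) and the susceptibility to be \(\chi = \partial M/\partial H\) evaluated at \(H = 0\), with \(K = J/k_B T\) and \(L = mH/k_B T\):

\[ M = \frac{m \sinh L}{\sqrt{\sinh^2 L + e^{-4K}}}, \qquad \chi\big|_{H=0} = \frac{m^2}{k_B T} \, e^{2J/k_B T}. \]

The prefactor \(m^2/k_B T\) is the Curie law for independent spins. The factor \(e^{2J/k_B T}\) exceeds one for a ferromagnet (\(J > 0\)), is less than one for an antiferromagnet (\(J < 0\)), and approaches one when \(k_B T \gg |J|\).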

Aligning the spins in a paramagnet is like herding cats: the individual spins are independent and don’t naturally take to pointing all in the same direction. Aligning the spins in a ferromagnet is like herding cows: the individual spins want to all go in the same direction and don’t care which direction it is. Aligning the spins in an antiferromagnet is like herding siblings in a dysfunctional family, where each sibling says “I want to go to the opposite of wherever my brother/sister wants to go.”