7.3: Open System Dynamics - Dephasing


So far we have discussed the density operator as something given at a particular time instant. Now let us discuss how it is formed, i.e. its evolution in time, starting from the simplest case when the probabilities \(W_j\) participating in Eq. (15) are time-independent, for one reason or another, to be discussed in a moment. In this case, in the Schrödinger picture, we may rewrite Eq. (15) as

\[\hat{w}(t)=\sum_j\left|w_j(t)\right\rangle W_j\left\langle w_j(t)\right|. \tag{64}\]

Taking a time derivative of both sides of this equation, multiplying them by \(i\hbar\), and applying Eq. (4.158) to the basis states \(w_j\), with the account of the fact that the Hamiltonian operator is Hermitian, we get

\[\begin{aligned}
i\hbar\dot{\hat{w}} &= i\hbar\sum_j\left(\left|\dot{w}_j(t)\right\rangle W_j\left\langle w_j(t)\right|+\left|w_j(t)\right\rangle W_j\left\langle\dot{w}_j(t)\right|\right)\\
&=\sum_j\left(\hat{H}\left|w_j(t)\right\rangle W_j\left\langle w_j(t)\right|-\left|w_j(t)\right\rangle W_j\left\langle w_j(t)\right|\hat{H}\right)\\
&\equiv\hat{H}\sum_j\left|w_j(t)\right\rangle W_j\left\langle w_j(t)\right|-\sum_j\left|w_j(t)\right\rangle W_j\left\langle w_j(t)\right|\hat{H}.
\end{aligned}\]

Now using Eq. (64) again (twice), we get the so-called von Neumann equation²²

\[i\hbar\dot{\hat{w}}=[\hat{H},\hat{w}]. \tag{66}\]

Note that this equation is similar in structure to Eq. (4.199) describing the time evolution of time-independent operators in the Heisenberg picture:

\[i\hbar\dot{\hat{A}}=[\hat{A},\hat{H}], \tag{67}\]

besides the opposite order of the operators in the commutator - equivalent to the change of sign of the right-hand side. This should not be too surprising, because Eq. (66) belongs to the Schrödinger picture of quantum dynamics, while Eq. (67) belongs to its Heisenberg picture.
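The von Neumann equation (66) may be checked numerically for a two-level system. The following is only a minimal sketch under stated assumptions: units with \(\hbar=1\), a hypothetical Hamiltonian \(\hat{H}=b_x\hat\sigma_x+b_y\hat\sigma_y+b_z\hat\sigma_z\) with arbitrarily chosen coefficients, and an arbitrarily chosen mixed initial state. It builds \(\hat{w}(t)\) from the unitary evolution of the basis states, as in Eq. (64), and compares a finite-difference estimate of \(i\hbar\dot{\hat{w}}\) with the commutator \([\hat{H},\hat{w}]\).

```python
import math

HBAR = 1.0  # assumption: units with hbar = 1


def matmul(a, b):
    """Product of two 2x2 complex matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    """Hermitian conjugate of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def commutator(a, b):
    """[a, b] = ab - ba."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


def propagator(bx, by, bz, t):
    """Closed-form U(t) = exp(-iHt/hbar) for H = bx*sx + by*sy + bz*sz."""
    b = math.sqrt(bx * bx + by * by + bz * bz)
    c, s = math.cos(b * t / HBAR), math.sin(b * t / HBAR)
    nx, ny, nz = bx / b, by / b, bz / b
    return [[c - 1j * s * nz, -1j * s * (nx - 1j * ny)],
            [-1j * s * (nx + 1j * ny), c + 1j * s * nz]]


def density(w0, field, t):
    """w(t) = U w(0) U^dagger, i.e. Eq. (64) with time-independent W_j."""
    u = propagator(*field, t)
    return matmul(matmul(u, w0), dagger(u))


field = (0.3, -0.2, 0.7)  # hypothetical Hamiltonian coefficients
bx, by, bz = field
H = [[bz, bx - 1j * by], [bx + 1j * by, -bz]]
w0 = [[0.7, 0.2 + 0.1j], [0.2 - 0.1j, 0.3]]  # mixed state: Tr w = 1, det w > 0

t, dt = 0.5, 1e-5
w_plus, w_minus = density(w0, field, t + dt), density(w0, field, t - dt)
# Left-hand side of Eq. (66): i*hbar*dw/dt from a central finite difference
lhs = [[1j * HBAR * (w_plus[i][j] - w_minus[i][j]) / (2 * dt) for j in range(2)]
       for i in range(2)]
# Right-hand side of Eq. (66): the commutator [H, w]
rhs = commutator(H, density(w0, field, t))
max_err = max(abs(lhs[i][j] - rhs[i][j]) for i in range(2) for j in range(2))
print(max_err)  # small; limited only by the finite-difference step
```

Swapping the order of the arguments in `commutator` flips the sign of the right-hand side, which is exactly the difference between Eqs. (66) and (67).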

The most important case when the von Neumann equation is (approximately) valid is when the "own" Hamiltonian \(\hat{H}_s\) of the system s of our interest is time-independent, and its interaction with the environment is so small that its effect on the system’s evolution during the considered time interval is negligible, but it had lasted so long that it gradually put the system into a non-pure state - for example, but not necessarily, into the classical mixture (24).²³ (This is an example of the second case discussed in Sec. 1, when we need the mixed-ensemble description of the system even if its current interaction with the environment is negligible.) If the interaction with the environment is stronger, and hence is not negligible at the considered time interval, Eq. (66) is generally not valid,²⁴ because the probabilities \(W_j\) may change in time. However, this equation may still be used for a discussion of one major effect of the environment, namely dephasing (also called "decoherence"), within a simple model.

Let us start with the following general model of a system interacting with its environment, which will be used throughout this chapter:

\[\hat{H}=\hat{H}_s+\hat{H}_e\{\lambda\}+\hat{H}_{int}, \tag{68}\]

where \(\{\lambda\}\) denotes the (huge) set of degrees of freedom of the environment.²⁵ Evidently, this model is useful only if we may somehow tame the enormous size of the Hilbert space of these degrees of freedom, and so work out the calculations all the way to a practicably simple result. This turns out to be possible mostly if the elementary act of interaction of the system and its environment is in some sense small. Below, I will describe several cases when this is true; the classical example is the Brownian particle interacting with the molecules of the surrounding gas or fluid.²⁶ (In this example, a single hit by a molecule changes the particle’s momentum by a minor fraction.) On the other hand, the model (68) is not very productive for a particle interacting with the environment consisting of similar particles, when a single collision may change its momentum dramatically. In such cases, the methods discussed in the next chapter are more relevant.

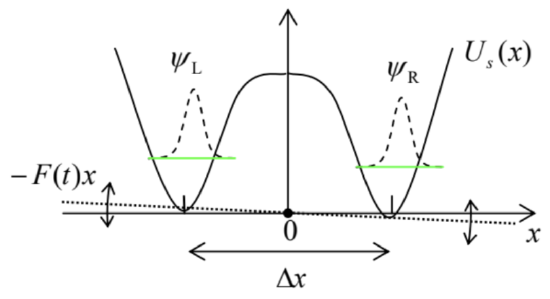

Now let us analyze a very simple model of an open two-level quantum system, with its intrinsic Hamiltonian having the form

\[\hat{H}_s=c_z\hat{\sigma}_z, \tag{69}\]

similar to the Pauli Hamiltonian (4.163),²⁷ and a factorable, bilinear interaction - cf. Eq. (6.145) and its discussion:

\[\hat{H}_{int}=\hat{f}\{\lambda\}\hat{\sigma}_z, \tag{70}\]

where \(\hat{f}\) is a Hermitian operator depending only on the set \(\{\lambda\}\) of environmental degrees of freedom ("coordinates"), defined in their Hilbert space - different from that of the two-level system. As a result, the operators \(\hat{f}\{\lambda\}\) and \(\hat{H}_e\{\lambda\}\) commute with \(\hat{\sigma}_z\) - and with any other intrinsic operator of the two-level system. Of course, any realistic \(\hat{H}_e\{\lambda\}\) is extremely complex, so how much we will be able to achieve without specifying it may be a pleasant surprise for the reader.

Before we proceed to the analysis, let us recognize two examples of two-level systems that may be described by this model. The first example is a spin-1/2 in an external magnetic field of a fixed direction (taken for the axis z), which includes both an average component \(\bar{B}_z\) and a random (fluctuating) component \(\tilde{B}_z(t)\) induced by the environment. As it follows from Eq. (4.163b), it may be described by the Hamiltonian (68)-(70) with

\[c_z=-\frac{\hbar\gamma}{2}\bar{B}_z, \quad \text{and} \quad \hat{f}=-\frac{\hbar\gamma}{2}\hat{\tilde{B}}_z(t).\]

Another example is a particle in a symmetric double-well potential \(U_s\) (Fig. 4), with a barrier between the wells sufficiently high to be practically impenetrable, and an additional force \(F(t)\), exerted by the environment, so that the total potential energy is \(U(x,t)=U_s(x)-F(t)x\). If the force, including its static part \(\bar{F}\) and fluctuations \(\tilde{F}(t)\), is sufficiently weak, we can neglect its effects on the shape of the potential wells and hence on the localized wavefunctions \(\psi_{L,R}\), so that the force effect is reduced to the variation of the difference \(E_L-E_R=F(t)\Delta x\) between the eigenenergies. As a result, the system may be described by Eqs. (68)-(70) with

\[c_z=-\bar{F}\Delta x/2; \quad \hat{f}=-\hat{\tilde{F}}(t)\Delta x/2.\]

Fig. 7.4. Dephasing in a double-well system.

Let us start our general analysis of the model described by Eqs. (68)-(70) by writing the equation of motion for the Heisenberg operator \(\hat{\sigma}_z(t)\):

\[i\hbar\dot{\hat{\sigma}}_z=[\hat{\sigma}_z,\hat{H}]=(c_z+\hat{f})[\hat{\sigma}_z,\hat{\sigma}_z]=0, \tag{73}\]

showing that in our simple model (68)-(70), the operator \(\hat{\sigma}_z\) does not evolve in time. What does this mean for the observables? For an arbitrary density matrix of any two-level system,

\[\mathrm{w}=\begin{pmatrix} w_{11} & w_{12}\\ w_{21} & w_{22}\end{pmatrix},\]

we can readily calculate the trace of the operator \(\hat{\sigma}_z\hat{w}\). Indeed, since operator traces are basis-independent, we can do this in any basis, in particular in the usual z-basis:

\[\operatorname{Tr}(\hat{\sigma}_z\hat{w})=\operatorname{Tr}(\sigma_z\mathrm{w})=\operatorname{Tr}\left[\begin{pmatrix} 1 & 0\\ 0 & -1\end{pmatrix}\begin{pmatrix} w_{11} & w_{12}\\ w_{21} & w_{22}\end{pmatrix}\right]=w_{11}-w_{22}=W_1-W_2.\]

Since, according to Eq. (5), \(\hat{\sigma}_z\) may be considered the operator for the difference of the number of particles in the basis states 1 and 2, in the case (73) the difference \(W_1-W_2\) does not depend on time, and since the sum of these probabilities is also fixed, \(W_1+W_2=1\), both of them are constant. The physics of this simple result is especially clear for the model shown in Fig. 4: since the potential barrier separating the potential wells is so high that tunneling through it is negligible, the interaction with the environment cannot move the system from one well into the other.
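The conservation of \(W_1-W_2\) may be illustrated with a few lines of code. This is a sketch rather than a general proof: it assumes that the factor \(c_z+\hat{f}\) may be replaced with a c-number, so that the evolution over some time interval is the diagonal unitary matrix \(\exp\{-i\theta\sigma_z\}\), where `theta` is a hypothetical accumulated phase. The density matrix elements are arbitrary illustrative values.

```python
import cmath

SIGMA_Z = [[1, 0], [0, -1]]


def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def sigma_z_average(w):
    """Tr(sigma_z w) = w11 - w22, evaluated in the z-basis."""
    product = matmul(SIGMA_Z, w)
    return (product[0][0] + product[1][1]).real


w = [[0.7, 0.2 + 0.1j], [0.2 - 0.1j, 0.3]]  # illustrative density matrix

# Evolution generated by (c_z + f)*sigma_z, with a hypothetical c-number phase
theta = 1.234
u = [[cmath.exp(-1j * theta), 0], [0, cmath.exp(1j * theta)]]
u_dag = [[cmath.exp(1j * theta), 0], [0, cmath.exp(-1j * theta)]]
w_later = matmul(matmul(u, w), u_dag)

before = sigma_z_average(w)        # W1 - W2 at the initial moment
after = sigma_z_average(w_later)   # the same difference after the evolution
print(before, after)
```

In this sketch the diagonal elements stay intact, while `w_later[0][1]` differs from `w[0][1]` only by a phase factor.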

It may look like nothing interesting may happen in such a simple situation, but in a minute we will see that this is not true. Due to the time independence of \(W_1\) and \(W_2\), we may use the von Neumann equation (66) to describe the density matrix evolution. In the usual z-basis:

\[\begin{aligned}
i\hbar\dot{\mathrm{w}} &\equiv i\hbar\begin{pmatrix}\dot{w}_{11} & \dot{w}_{12}\\ \dot{w}_{21} & \dot{w}_{22}\end{pmatrix}=[\mathrm{H},\mathrm{w}]\equiv(c_z+\hat{f})[\sigma_z,\mathrm{w}]\\
&\equiv(c_z+\hat{f})\left[\begin{pmatrix} 1 & 0\\ 0 & -1\end{pmatrix},\begin{pmatrix} w_{11} & w_{12}\\ w_{21} & w_{22}\end{pmatrix}\right]=(c_z+\hat{f})\begin{pmatrix} 0 & 2w_{12}\\ -2w_{21} & 0\end{pmatrix}.
\end{aligned}\]

This result means that while the diagonal elements, i.e., the probabilities of the states, do not evolve in time (as we already know), the off-diagonal elements do change; for example,

\[i\hbar\dot{w}_{12}=2(c_z+\hat{f})w_{12}, \tag{77}\]

with a similar but complex-conjugate equation for \(w_{21}\). The solution of this linear differential equation (77) is straightforward, and yields

\[w_{12}(t)=w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}t\right\}\exp\left\{-i\frac{2}{\hbar}\int_0^t\hat{f}(t')dt'\right\}. \tag{78}\]

The first exponential is a deterministic c-number factor, while in the second one \(\hat{f}(t)\equiv\hat{f}\{\lambda(t)\}\) is still an operator in the Hilbert space of the environment, but from the point of view of the two-level system of our interest, it is a random function of time. The time-average part of this function may be included in \(c_z\), so in what follows, we will assume that it equals zero.
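The solution (78) may be checked against Eq. (77) for any particular c-number function \(f(t)\). The sketch below assumes units with \(\hbar=1\) and a hypothetical deterministic "force" \(f(t)=F_0\cos\Omega t\), chosen only because its time integral is known in closed form; the constants are arbitrary.

```python
import cmath
import math

HBAR = 1.0               # assumption: units with hbar = 1
C_Z = 0.8                # hypothetical value of c_z
F0, OMEGA = 0.5, 3.0     # hypothetical f(t) = F0*cos(OMEGA*t)


def f(t):
    """A deterministic stand-in for one realization of the random force."""
    return F0 * math.cos(OMEGA * t)


def f_integral(t):
    """Integral of f(t') over [0, t], in closed form."""
    return F0 * math.sin(OMEGA * t) / OMEGA


def w12(t, w12_0=0.25 + 0.1j):
    """Eq. (78) for a c-number f(t)."""
    phase = 2.0 * (C_Z * t + f_integral(t)) / HBAR
    return w12_0 * cmath.exp(-1j * phase)


t, dt = 1.3, 1e-5
# Left- and right-hand sides of Eq. (77) at the time t
lhs = 1j * HBAR * (w12(t + dt) - w12(t - dt)) / (2 * dt)
rhs = 2.0 * (C_Z + f(t)) * w12(t)
residual = abs(lhs - rhs)

# For a single realization of f(t), only the phase of w12 changes
modulus_drift = abs(abs(w12(t)) - abs(w12(0.0)))
print(residual, modulus_drift)
```

Note that for any single function \(f(t)\) the modulus of \(w_{12}\) is conserved; a decay can appear only after averaging over many realizations.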

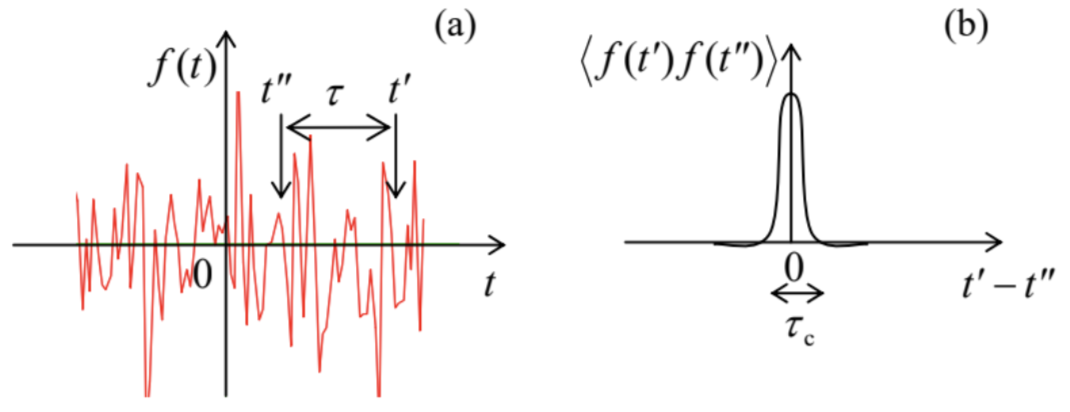

Let us start from the limit when the environment behaves classically.²⁸ In this case, the operator in Eq. (78) may be considered as a classical random function of time \(f(t)\), provided that we average its effects over a statistical ensemble of many functions \(f(t)\) describing many (macroscopically similar) experiments. For a small time interval \(t=dt\rightarrow 0\), we can use the Taylor expansion of the exponent, truncating it after the quadratic term:

\[\begin{aligned}
\left\langle\exp\left\{-i\frac{2}{\hbar}\int_0^{dt}f(t')dt'\right\}\right\rangle &\approx 1+\left\langle-i\frac{2}{\hbar}\int_0^{dt}f(t')dt'\right\rangle+\left\langle\frac{1}{2}\left(-i\frac{2}{\hbar}\int_0^{dt}f(t')dt'\right)\left(-i\frac{2}{\hbar}\int_0^{dt}f(t'')dt''\right)\right\rangle\\
&\equiv 1-i\frac{2}{\hbar}\int_0^{dt}\langle f(t')\rangle dt'-\frac{2}{\hbar^2}\int_0^{dt}dt'\int_0^{dt}dt''\langle f(t')f(t'')\rangle\\
&\equiv 1-\frac{2}{\hbar^2}\int_0^{dt}dt'\int_0^{dt}dt''K_f(t'-t'').
\end{aligned} \tag{79}\]

Here we have used the facts that the statistical average of \(f(t)\) is equal to zero, while the second average, called the correlation function, in a statistically- (i.e. macroscopically-) stationary state of any environment may only depend on the time difference \(\tau\equiv t'-t''\):

\[\langle f(t')f(t'')\rangle=K_f(t'-t'')\equiv K_f(\tau).\]

If this difference is much larger than some time scale \(\tau_c\), called the correlation time of the environment, the values \(f(t')\) and \(f(t'')\) are completely independent (uncorrelated), as illustrated in Fig. 5a, so that at \(\tau\rightarrow\infty\), the correlation function has to tend to zero. On the other hand, at \(\tau=0\), i.e. \(t'=t''\), the correlation function is just the variance of \(f\):

\[K_f(0)=\langle f^2\rangle,\]

and has to be positive. As a result, the function looks (semi-quantitatively) as shown in Fig. 5b.
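These properties of the correlation function may be illustrated with a synthetic random process. The sketch below is not a model of any particular environment: it assumes an exponentially correlated Gaussian noise generated by a first-order autoregressive recurrence, with hypothetical values of the time step and of \(\tau_c\), and it estimates \(K_f(\tau)\) by a time average over one long realization instead of the ensemble average (an additional assumption, valid for an ergodic process).

```python
import math
import random


def ar1_noise(n_samples, dt, tau_c, seed=1):
    """Stationary, zero-mean, unit-variance noise with K_f(tau) = exp(-|tau|/tau_c)."""
    rng = random.Random(seed)
    a = math.exp(-dt / tau_c)        # memory factor per time step
    kick = math.sqrt(1.0 - a * a)    # keeps the variance equal to 1
    f = [rng.gauss(0.0, 1.0)]
    for _ in range(n_samples - 1):
        f.append(a * f[-1] + kick * rng.gauss(0.0, 1.0))
    return f


def correlation(f, lag):
    """Time-average estimate of K_f(lag*dt) = <f(t) f(t + lag*dt)>."""
    n = len(f) - lag
    return sum(f[i] * f[i + lag] for i in range(n)) / n


dt, tau_c = 0.1, 1.0                 # hypothetical time step and correlation time
f = ar1_noise(200_000, dt, tau_c)

k0 = correlation(f, 0)       # tau = 0: the variance <f^2>, positive
k_tau = correlation(f, 10)   # tau = tau_c: noticeably reduced
k_far = correlation(f, 100)  # tau = 10*tau_c: nearly zero
print(k0, k_tau, k_far)
```

For this particular process, the estimates should be close to 1, \(e^{-1}\), and 0, respectively, within the statistical scatter of a finite sample.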

Fig. 7.5. (a) A typical random process and (b) its correlation function - schematically.

Hence, if we are only interested in time differences \(\tau\) much longer than \(\tau_c\), which is typically very short, we may approximate \(K_f(\tau)\) well with a delta function of the time difference. Let us take it in the following form, convenient for later discussion:

\[K_f(\tau)\approx\hbar^2D_\varphi\delta(\tau), \tag{82}\]

where \(D_\varphi\) is a positive constant called the phase diffusion coefficient. This term stems from the very similar effect of classical diffusion of Brownian particles in a highly viscous medium. Indeed, the particle’s velocity in such a medium is approximately proportional to the external force. Hence, if the random hits of a particle by the medium’s molecules may be described by a force that obeys a law similar to Eq. (82), the velocity (along any Cartesian coordinate) is also delta-correlated:

\[\langle v(t)\rangle=0, \quad \langle v(t')v(t'')\rangle=2D\delta(t'-t'').\]

Now we can integrate the kinematic relation \(\dot{x}=v\), to calculate the particle’s displacement from its initial position during a time interval \([0,t]\), and its variance:

\[x(t)-x(0)=\int_0^tv(t')dt',\]

\[\begin{aligned}
\left\langle(x(t)-x(0))^2\right\rangle &=\left\langle\int_0^tv(t')dt'\int_0^tv(t'')dt''\right\rangle=\int_0^tdt'\int_0^tdt''\langle v(t')v(t'')\rangle\\
&=\int_0^tdt'\int_0^tdt''\,2D\delta(t'-t'')=2Dt.
\end{aligned}\]

This is the famous law of diffusion, showing that the r.m.s. deviation of the particle from the initial point grows with time as \((2Dt)^{1/2}\), where the constant \(D\) is called the diffusion coefficient.
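The diffusion law may be reproduced by a simple Monte Carlo simulation. In the sketch below, the delta-correlated velocity is discretized in time: over each step of duration `dt`, the displacement increment is taken as an independent Gaussian random number with the variance \(2D\,dt\), which follows from integrating the velocity correlation function over the step. The Gaussian statistics, the values of \(D\) and \(t\), and the numbers of steps and walkers are all assumptions of this illustration.

```python
import math
import random


def mean_square_displacement(D, t_total, n_steps, n_walkers, seed=0):
    """Ensemble average of (x(t) - x(0))^2 for delta-correlated velocity."""
    rng = random.Random(seed)
    dt = t_total / n_steps
    step_sigma = math.sqrt(2.0 * D * dt)  # r.m.s. displacement per step
    total = 0.0
    for _ in range(n_walkers):
        x = 0.0
        for _ in range(n_steps):
            x += rng.gauss(0.0, step_sigma)
        total += x * x
    return total / n_walkers


D, t_total = 0.5, 2.0        # hypothetical values, in arbitrary units
msd = mean_square_displacement(D, t_total, n_steps=50, n_walkers=5000)
expected = 2.0 * D * t_total  # the diffusion law, 2Dt
print(msd, expected)
```

With 5000 walkers, the estimate should agree with \(2Dt\) within a few percent; the scatter decreases as the inverse square root of the number of walkers.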

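Returning to the diffusion of the quantum-mechanical phase, with Eq. (82) the last double integral in Eq. (79) yields \(\hbar^2D_\varphi dt\), so that the statistical average of Eq. (78) is

\[\langle w_{12}(dt)\rangle=w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}dt\right\}(1-2D_\varphi dt).\]

Applying this formula to sequential time intervals,

\[\langle w_{12}(2dt)\rangle=\langle w_{12}(dt)\rangle\exp\left\{-i\frac{2c_z}{\hbar}dt\right\}(1-2D_\varphi dt)=w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}2dt\right\}(1-2D_\varphi dt)^2,\]

etc., for a finite time \(t=Ndt\), in the limit \(N\rightarrow\infty\) and \(dt\rightarrow 0\) (at fixed \(t\)) we get

\[\langle w_{12}(t)\rangle=w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}t\right\}\times\lim_{N\rightarrow\infty}\left(1-2D_\varphi t\frac{1}{N}\right)^N.\]

By the definition of the natural logarithm base \(e\),²⁹ this limit is just \(\exp\{-2D_\varphi t\}\), so that, finally:

\[\langle w_{12}(t)\rangle=w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}t\right\}\exp\{-2D_\varphi t\}\equiv w_{12}(0)\exp\left\{-i\frac{2c_z}{\hbar}t\right\}\exp\left\{-\frac{t}{T_2}\right\}. \tag{88}\]

So, due to coupling to the environment, the off-diagonal elements of the density matrix decay with some dephasing time \(T_2=1/(2D_\varphi)\), providing a natural evolution from the density matrix (22) of a pure state to the diagonal matrix (23), with the same probabilities \(W_{1,2}\), describing a fully dephased (incoherent) classical mixture.³⁰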
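The exponential decay (88) may also be obtained by direct averaging over many realizations of the random phase. The following sketch makes several assumptions that are not part of the derivation above: the noise is taken Gaussian, the deterministic factor with \(c_z\) is dropped (i.e. only the second exponential of Eq. (78) is averaged), and the values of \(D_\varphi\) and \(t\) are arbitrary. According to Eq. (82), each time step `dt` then adds to the phase \((2/\hbar)\int f\,dt'\) an independent increment with the variance \(4D_\varphi dt\).

```python
import cmath
import math
import random


def averaged_phase_factor(d_phi, t_total, n_steps, n_runs, seed=0):
    """Monte Carlo estimate of <exp(-i*phi(t))> for delta-correlated f(t)."""
    rng = random.Random(seed)
    # Phase increment per step: variance (2/hbar)^2 * hbar^2 * D_phi * dt
    step_sigma = math.sqrt(4.0 * d_phi * t_total / n_steps)
    total = 0j
    for _ in range(n_runs):
        phi = 0.0
        for _ in range(n_steps):
            phi += rng.gauss(0.0, step_sigma)  # random walk of the phase
        total += cmath.exp(-1j * phi)
    return total / n_runs


D_PHI, T_TOTAL = 0.5, 1.0   # hypothetical values; here T2 = 1/(2*D_PHI) = 1
estimate = averaged_phase_factor(D_PHI, T_TOTAL, n_steps=20, n_runs=20000)
exact = math.exp(-2.0 * D_PHI * T_TOTAL)   # the decay factor exp{-t/T2}

# The limit used in the derivation: (1 - 2*D_phi*t/N)^N at a large N
N = 10**6
limit_form = (1.0 - 2.0 * D_PHI * T_TOTAL / N) ** N
print(abs(estimate), exact, limit_form)
```

Each realization contributes a factor of unit modulus; the decay of the average is entirely due to the spread of the phases over the ensemble.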

This simple model offers a very clear look at the nature of the decoherence: the random "force" \(f(t)\), exerted by the environment, "shakes" the energy difference between two eigenstates of the system and hence the instantaneous velocity \(2(c_z+f)/\hbar\) of their mutual phase shift \(\varphi(t)\) - cf. Eq. (22). Due to the randomness of the force, \(\varphi(t)\) performs a random walk around the trigonometric circle, so that the average of its trigonometric functions \(\exp\{\pm i\varphi\}\) over time gradually tends to zero, killing the off-diagonal elements of the density matrix. Our analysis, however, has left open two important issues:

(i) Is this approach valid for a quantum description of a typical environment?

(ii) If yes, what is physically the Dφ that was formally defined by Eq. (82)?

22 In some texts, it is called the "Liouville equation", due to its philosophical proximity to the classical Liouville theorem for the classical distribution function \(w_{cl}(X,P)\) - see, e.g., SM Sec. 6.1 and in particular Eq. (6.5).

23 In the last case, the statistical operator is diagonal in the stationary state basis and hence commutes with the Hamiltonian. Hence the right-hand side of Eq. (66) vanishes, and it shows that in this basis, the density matrix is completely time-independent.

24 Very unfortunately, this fact is not explained in some textbooks, which quote the von Neumann equation without proper qualifications.

25 Note that by writing Eq. (68), we are treating the whole system, including the environment, as a Hamiltonian one. This can always be done if the accounted part of the environment is large enough so that the processes in the system s of our interest do not depend on the type of boundary between this part and the "external" (even larger) environment; in particular, we may assume the total system to be closed, i.e. Hamiltonian.

26 The theory of the Brownian motion, the effect first observed experimentally by biologist Robert Brown in the 1820s, was pioneered by Albert Einstein in 1905 and developed in detail by Marian Smoluchowski in 1906-1907 and Adriaan Fokker in 1913. Due to this historic background, in some older texts, the approach described in the balance of this chapter is called the "quantum theory of the Brownian motion". Let me, however, emphasize that due to the later progress of experimental techniques, quantum-mechanical behaviors, including the environmental effects in them, have been observed in a rapidly growing number of various quasi-macroscopic systems, for which this approach is quite applicable. In particular, this is true for most systems being explored as possible qubits of prospective quantum computing and encryption systems - see Sec. 8.5 below.

27 As we know from Secs. 4.6 and 5.1, such a Hamiltonian is sufficient to lift the energy level degeneracy.

28 This assumption is not in contradiction with the need for the quantum treatment of the two-level system s, because a typical environment is large, and hence has a very dense energy spectrum, with the distances between adjacent levels that may be readily bridged by thermal excitations of small energies, often making it essentially classical.

29 See, e.g., MA Eq. (1.2a) with \(n=-N/2D_\varphi t\).

30 Note that this result is valid only if the approximation (82) may be applied at time interval \(dt\) which, in turn, should be much smaller than the \(T_2\) in Eq. (88), i.e. if the dephasing time is much longer than the environment’s correlation time \(\tau_c\). This requirement may always be satisfied by making the coupling to the environment sufficiently weak. In addition, in typical environments, \(\tau_c\) is very short. For example, in the original Brownian motion experiments with a-few-μm pollen grains in water, it is of the order of the average interval between sequential molecular impacts, of the order of \(10^{-21}\) s.